Climate Change Science Essay

by Ken Gregory

INTRODUCTION

A goal of the Friends of Science Society is to educate the public about climate science and the scientific merits of the hypothesis of human induced global warming. The science of climate change is complex. Unfortunately, politics and the media have affected the science. Climate research institutions know that they must present scary climate forecasts to receive continued funding - no crisis means no funding. The media presents stories of climate disaster to sell their products. Scientific research that suggests climate change is mostly natural does not receive much if any media coverage. These factors have caused the general public to be seriously misled on climate issues, resulting in wasteful expenditures of billions of dollars in an ineffective attempt to control climate. This document provides an overview of climate change issues as determined by a comprehensive review of the state of climate science.

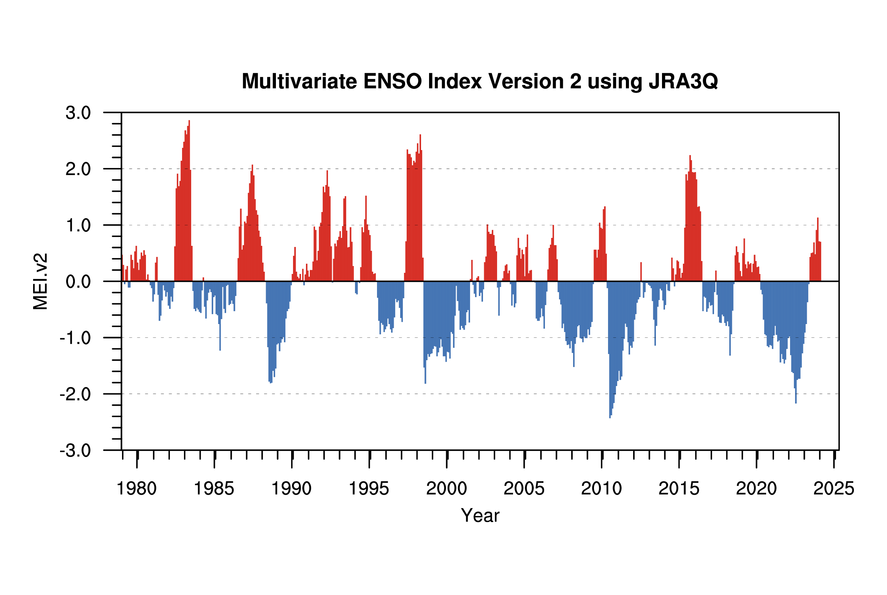

The graph above shows the temperature changes of the lower troposphere from the surface up to about 8 km as determined by the University of Alabama in Huntsville (UAH) satellite data. The best fit line (dark blue) from January 1979 to April 2026 indicates a trend of 0.16 °Celsius/decade. The sharp temperature spikes in 1998, 2010, 2016 and 2023 are El Nino events. Surface temperature data is contaminated by the effects of urban development.

The Hunga Tonga-Hunga Haʻapai underwater volcano also contributed to the high temperatures from 2023 to 2025 by ejecting a large volume of water vapour that increased the concentration in the stratosphere by about 10%. This caused part of the global temperature rise in 2023.

The Sun's activity, which was increasing through most of the 20th century, has recently become quiet. The magnetic flux from the Sun reached a peak in 1991. The high magnetic flux reduces cloud cover and causes warming. Since then the Sun has become quiet; however, it continues to cause warming for a few decades after its peak intensity due to the huge heat capacity of the oceans. The data are obtained from microwave sounding units (MSUs) on the National Oceanic and Atmospheric Administration's satellites, which relate the intensity or brightness of microwaves emitted by oxygen molecules in the atmosphere to temperature. The MSU data set represents the temperatures of a layer of the atmosphere that extends from the surface to approximately 8 kilometres (5 miles) above the surface. The dark red line is the 5-yr centered average of climate models' lower troposphere. The model trend is 172% of the measurements. The UAH UHIE corr (light blue) line is the Urban Heat Island Effect corrected trend based on this study which gives a correction of -0.017 °C/decade.

The Science In Summary

The history of the Earth tells us that the climate is always changing; from warm periods when the dinosaurs flourished, to the many ice ages when glaciers covered much of the land. Climate has always changed due to natural cycles without any help from people.

The United Nations Intergovernmental Panel on Climate Change (IPCC) is a political organization promoting a theory that recent minor temperature increases may be caused largely by man-made carbon dioxide (CO2) emissions. CO2 is an infrared-absorbing gas, and increasing concentrations can potentially increase the average global temperature as the gas absorbs long-wave radiation from the Earth and emits the absorbed energy. However, the warming ability of CO2 is limited because much of the absorption spectrum is near saturated. When CO2 concentrations were ten times greater than today the Earth was in the grips of one of the coldest ice ages. The climate system is dominated by strong negative feedbacks from clouds and water vapour which offset the warming effects of CO2 emissions.

The history of climate and CO2 concentration shows that temperature changes precede CO2 changes, so CO2 cannot be a major driver of climate. Temperature changes over different time scales have been well correlated to solar cycles, cosmic ray flux and cloud cover. Recent research shows that cosmic rays act as a catalyst to create low clouds, which cool the planet. When the Sun is more active, the solar wind repels the cosmic rays, reducing low cloud cover and allowing the Sun to warm the planet.

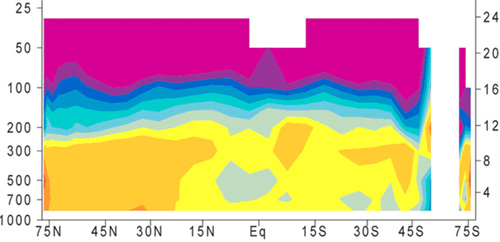

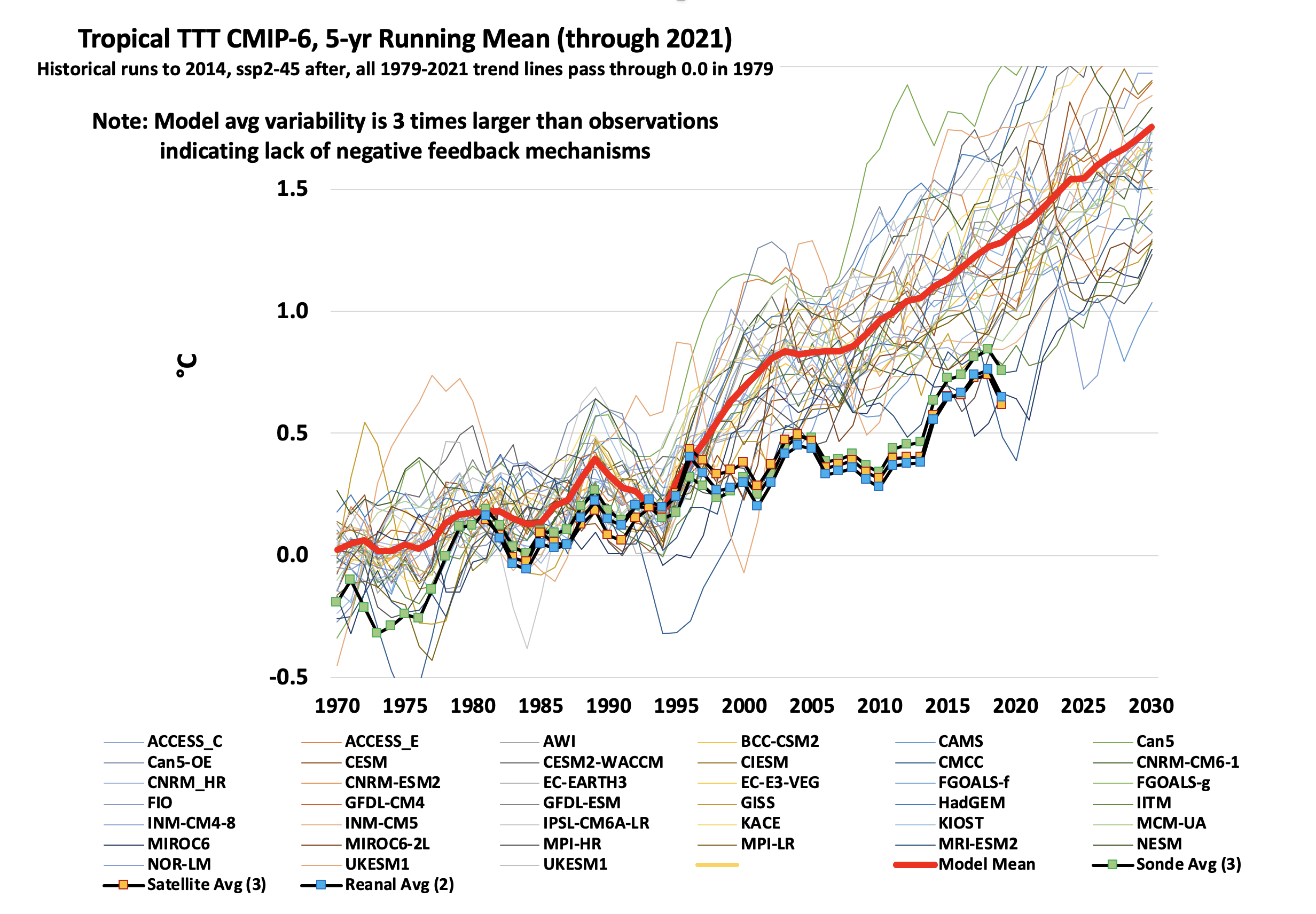

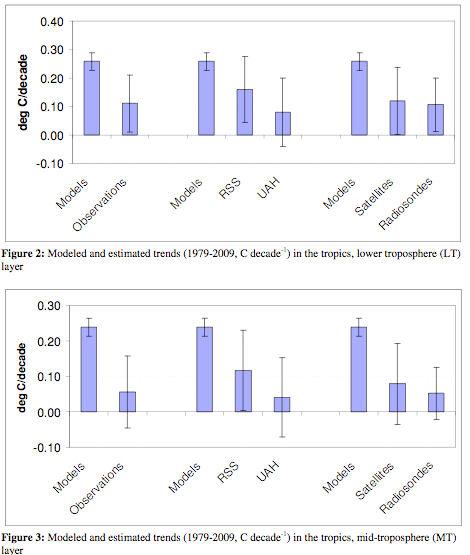

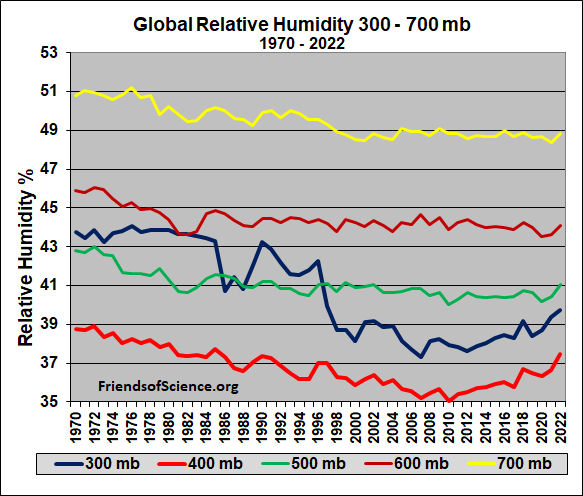

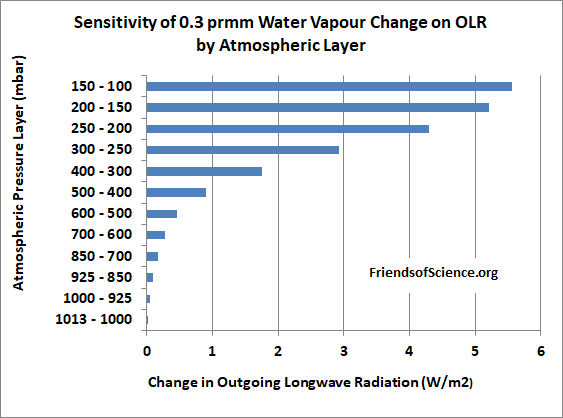

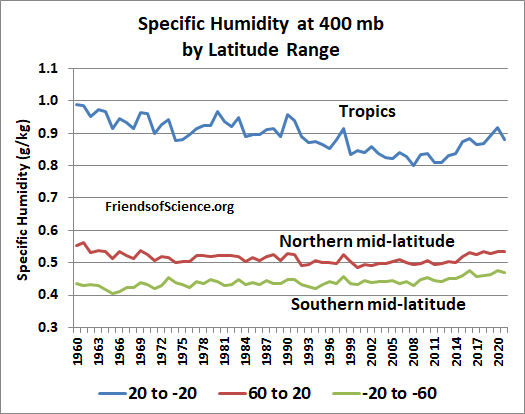

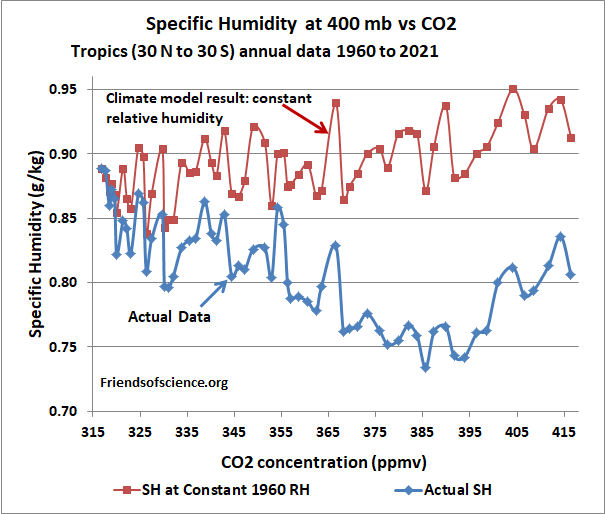

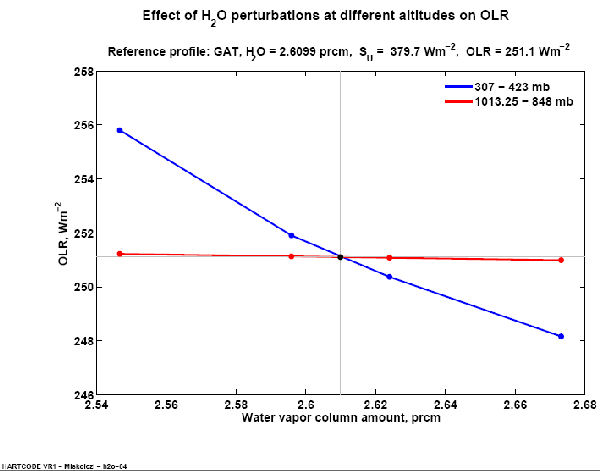

Computer model results presented in the IPCC Fifth Assessment Report predict that global warming will cause a distinctive temperature profile in the atmosphere of enhanced warming rate in the upper atmosphere at 8 to 12 km altitude over the tropics. The predicted temperature profile is the result of an expected increase in water vapour in the upper atmosphere which would amplify a CO2 induced warming three fold. The computer models are programmed to forecast a constant water vapour relative humidity with increasing CO2 resulting in a large water vapour feedback. Actual temperature data shows no such enhanced warming profile. Therefore, the comparison of observed data to computer models proves that no such water vapour induced warming amplification exists, so CO2 is not the main climate driver. In atmosphere layers near 8 km, the modelled temperature trend from 1980 is 200 to 400% higher than observed. Weather balloon data shows that specific humidity has fallen 9% since 1960 in the upper troposphere (400 mbar pressure level) where the models predict the greatest feedback. Adding CO2 to the atmosphere may reduce upper atmosphere water vapour, the most important greenhouse gas, resulting in only a small increase of the greenhouse effect.

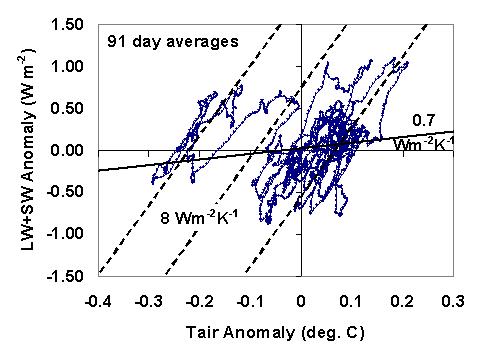

An analysis of satellite data shows that clouds cause a strong negative feedback on temperature, but climate models assume that clouds cause a positive feedback. Modellers assumed that all cloud changes are caused by temperature changes which results in them inferring a positive feedback. But changing cloud cover can also cause temperature changes. Scientists can now separate these two effects. The correct analysis shows that clouds cause a strong negative feedback, so if temperatures increase, cloud cover increases, reflecting solar energy back to space and greatly reducing the warming effect of CO2 emissions.

Several planets and moons have warmed recently along with the Earth, confirming a natural sun caused warming trend. Over longer time periods, as the solar system moves in and out of the galactic arms the cosmic ray flux changes, causing ice ages and warm ages. A comparison of temperature and solar activity proxy data suggests that solar effects can explain at least 75% of the surface warming during the last 100 years.

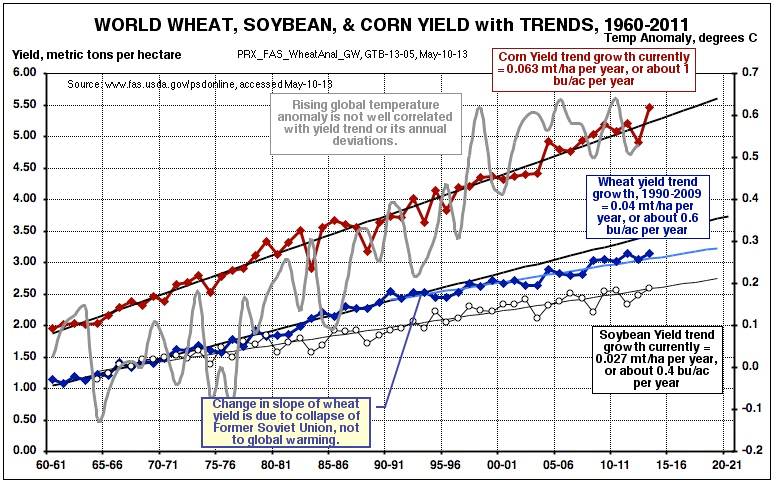

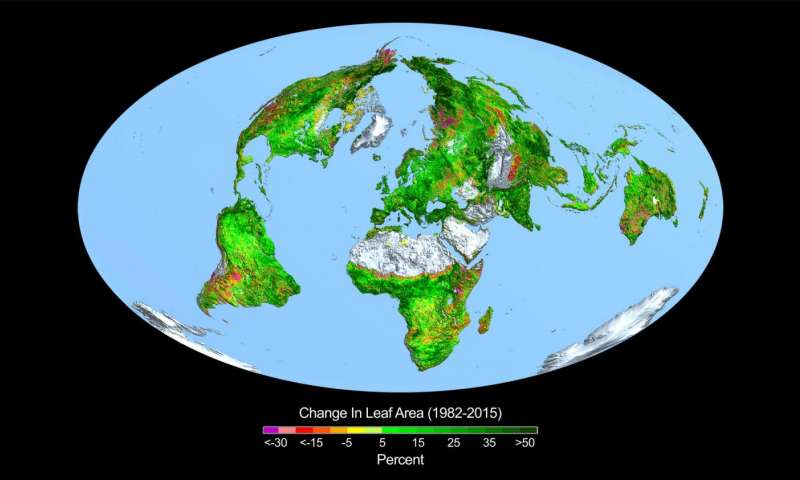

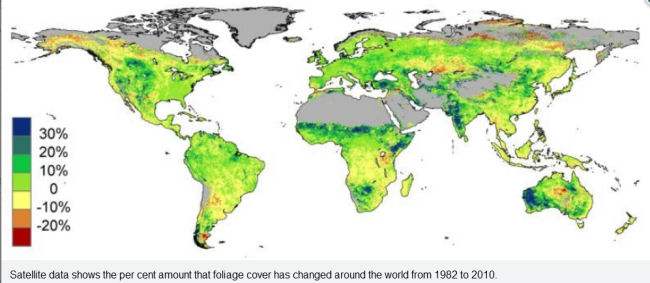

CO2 is plant food and the increase in the CO2 concentration may have increased the global food production by 15% since 1950 resulting in huge benefits for people. For Canada, any CO2 warming effect would also benefit us by reducing our space heating costs and making a more pleasant climate.

The IPCC predicts that global average temperatures will increase by 0.17 to 0.38 °C per decade to the end of the century depending on the rate of CO2 growth in the atmosphere and other assumptions. The projections assume that no action is taken to limit CO2 emissions. However, these predictions are unrealistic because they falsely assume that the recent temperature changes are driven solely by CO2 and that the Sun has little effect on climate. A recent study of past climate change used by the IPCC has been shown to be wrong due to the use of a faulty algorithm, and the inappropriate selection of data.

The land temperature record is contaminated by the urban heat island effect. Fully correcting the land temperature record would reduce the warming trend from 1980 to 2002 by half. The IPCC historical CO2 record may be incorrect due to inappropriate adjustments to the ice core data, and ignoring direct historical CO2 measurements. The IPCC selects and adjusts data to conform to its CO2 warming hypothesis and ignores alternative climate theories. This is the wrong way to do science. Many scientists strongly disagree with the IPCC conclusions.

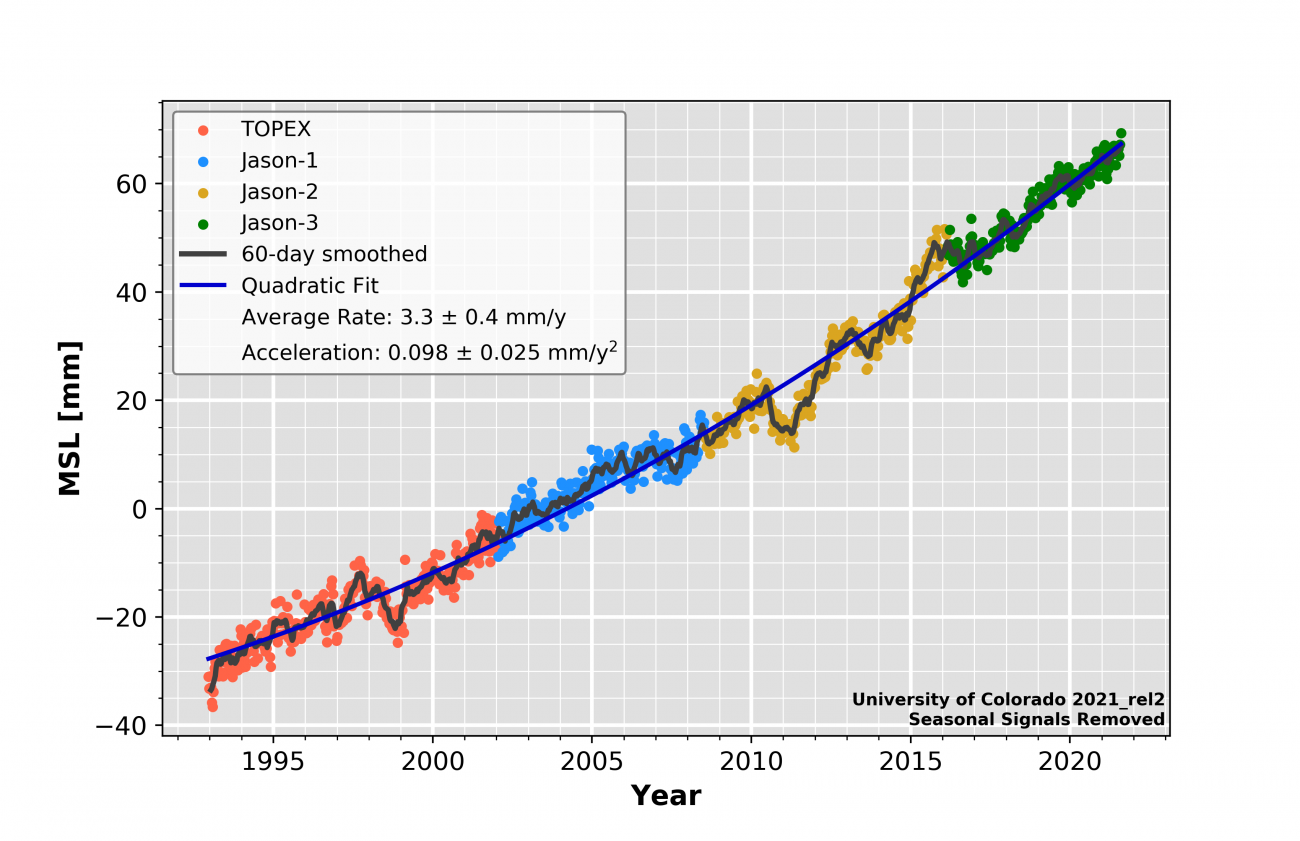

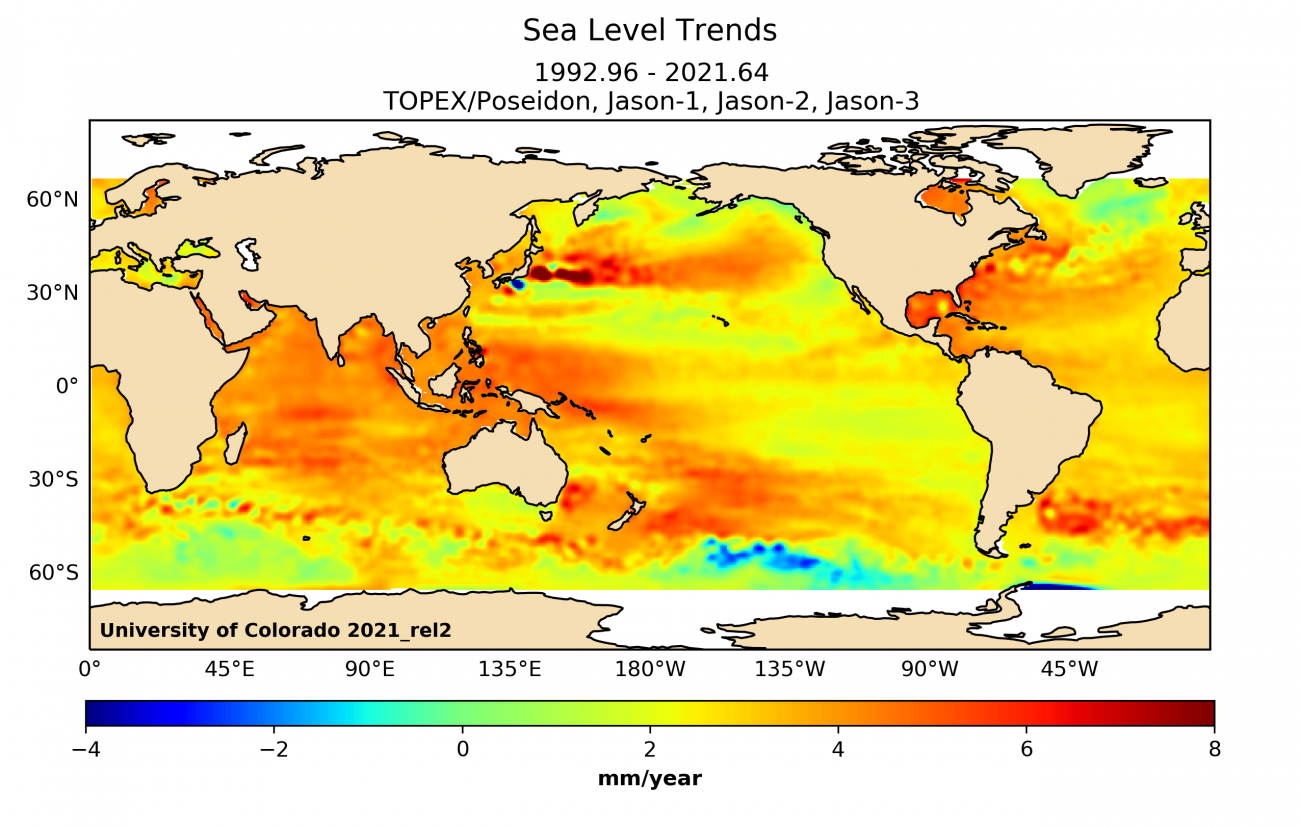

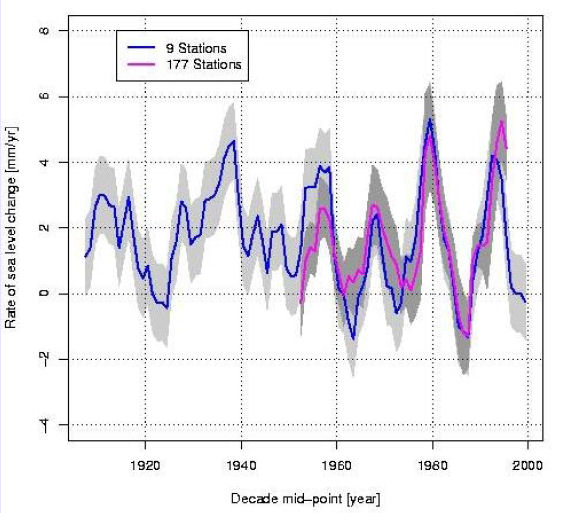

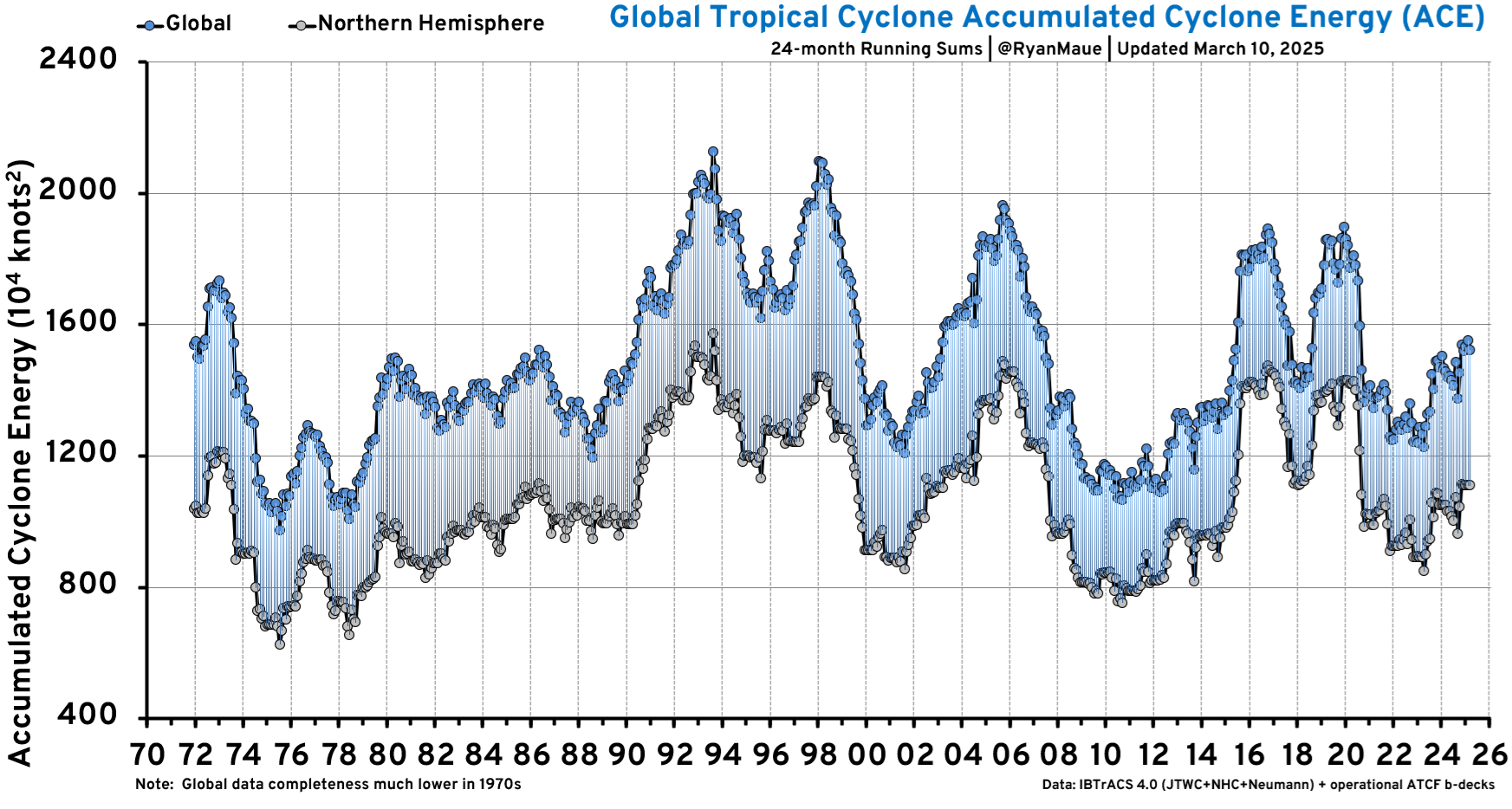

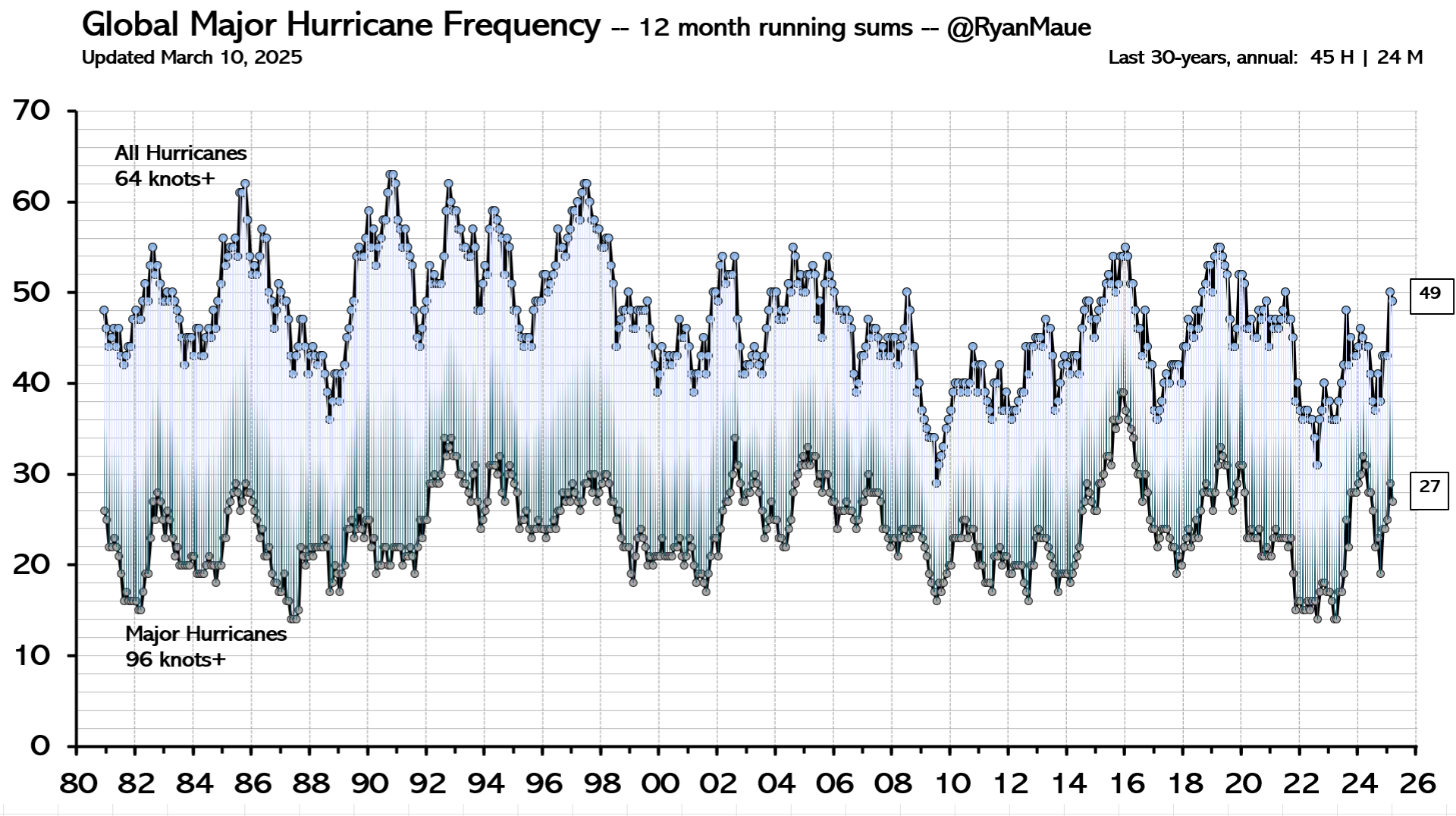

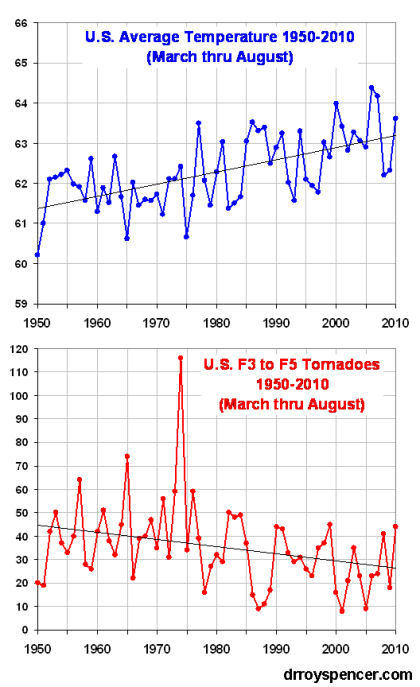

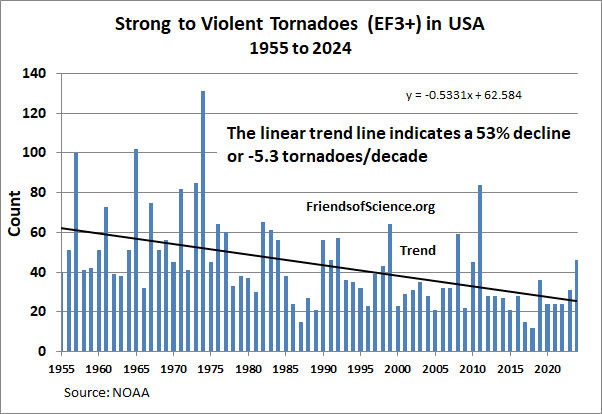

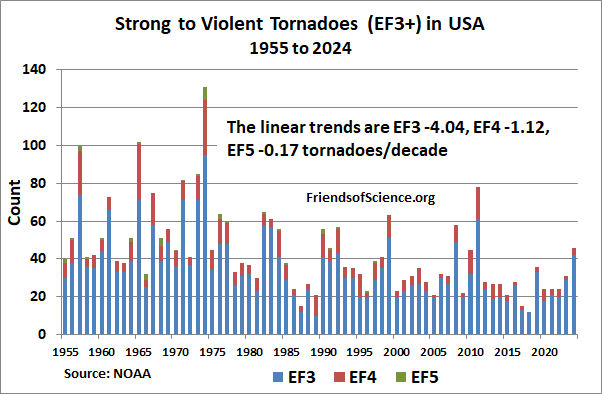

The sea level data shows no increase in the recent rate of sea level rise, and no such increase is expected over the next hundred years. There has been no detected increase in severe storms and there is no reason to expect an increase in the number or intensity of hurricanes resulting from any warming assumed to be from human caused CO2 emissions.

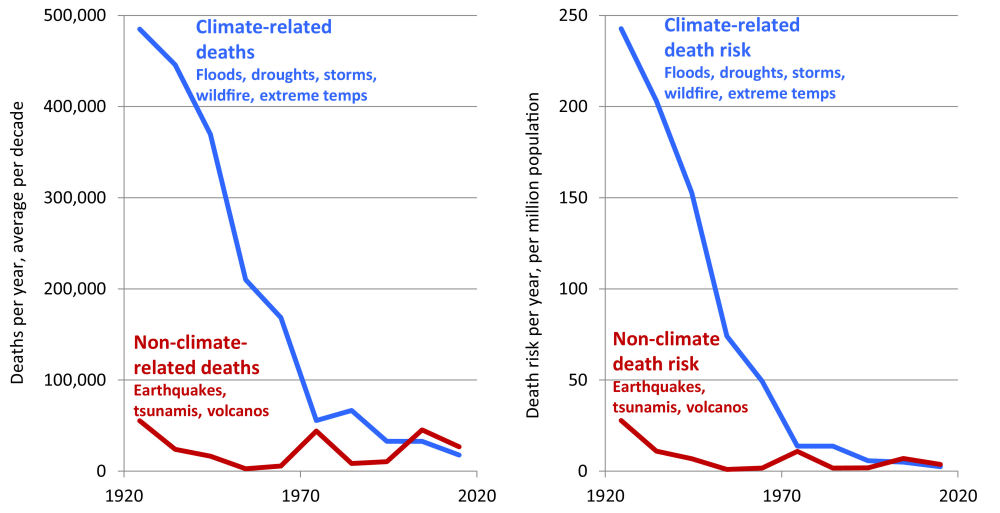

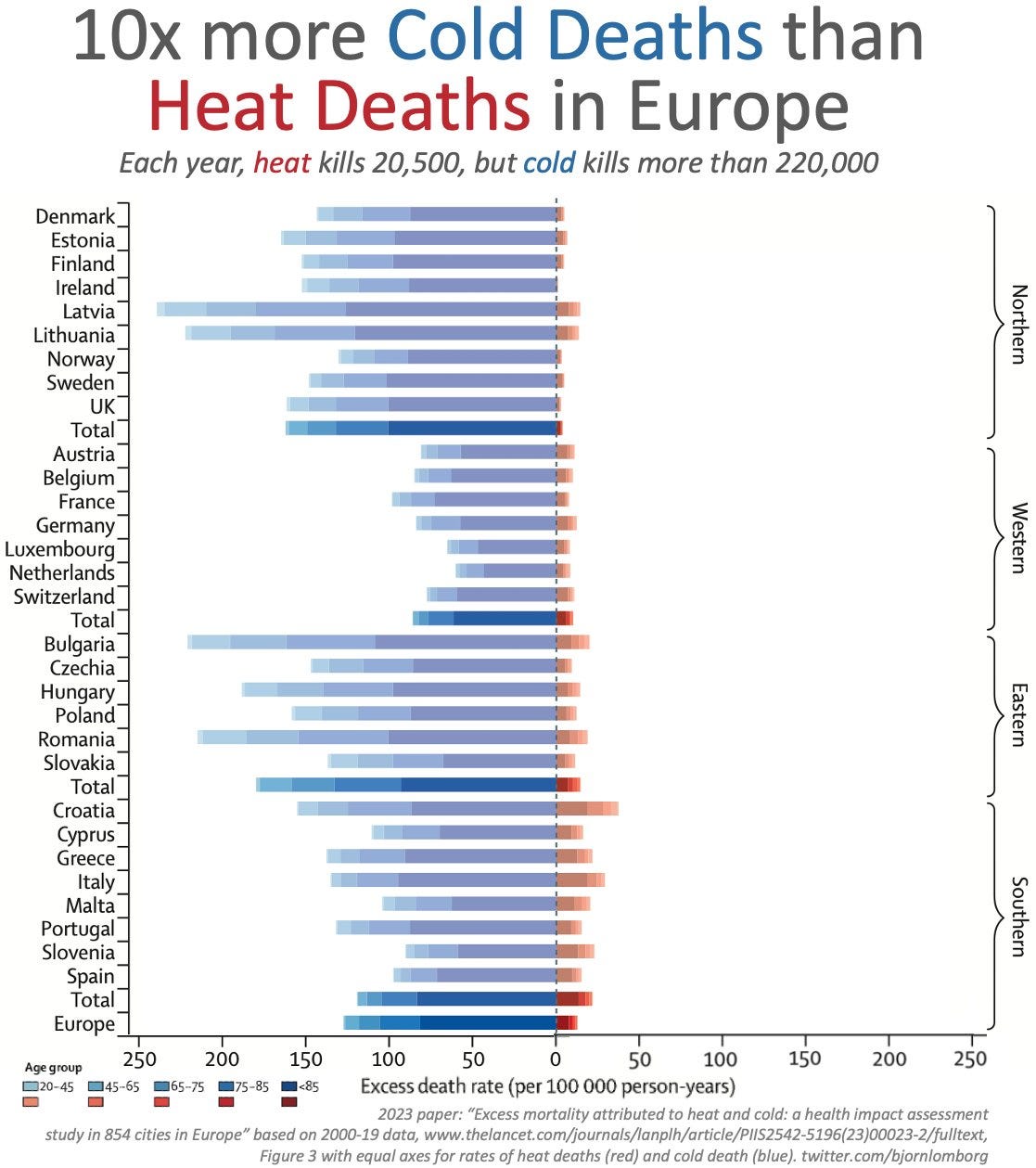

Any increase in temperatures due to human caused CO2 emissions will likely be beneficial to human health. The CO2 fertilization effect will increase the rate of forest growth and CO2 induced crop yield increases will reduce the pressures to cut down forests for farmland expansion. This will greatly benefit animals by slowing habitat destruction.

The benefits of CO2 emissions greatly exceed any likely harmful effects. Several authorities who have studied solar cycles have warned that the Earth may soon enter a cooling phase as the Sun is expected to become less active. The atmosphere may warm because of human activity, but if it does, the expected change is unlikely to be more than 0.8 °C, and probably less, in the next 100 years.

The Greenhouse Effect

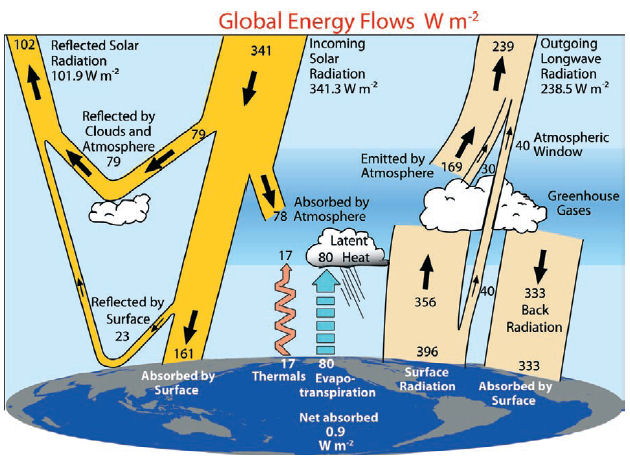

This graphic, from Trenberth et al 2009, illustrates the exchange of energy among Space, the Sun, the atmosphere and the Earth.

Greenhouse gases are primarily water vapour, carbon dioxide and ozone. Greenhouse gases are mostly transparent to incoming solar radiation, but absorb outgoing long wavelength radiation. The absorbed energy is then transferred to cooler molecules or radiated at longer wavelengths than the energy previously absorbed. This process makes the Earth warmer than it otherwise would be without the greenhouse gases (but with the atmosphere and clouds) by about 33 degrees Celsius.

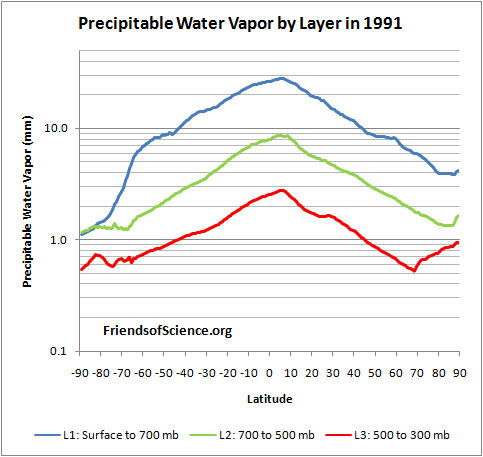

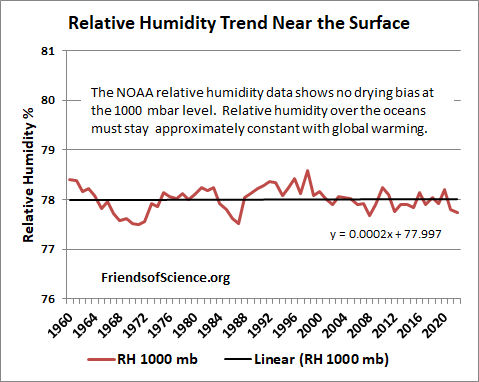

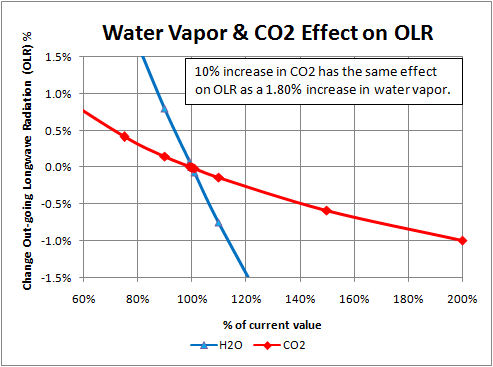

Water vapour and clouds together account for over 70% of the total current greenhouse effect. However, in terms of changes to the greenhouse effect due to human activities, water vapour is generally considered a feedback and not a forcing agent. Computer simulations show that a uniform 1.8% change in water vapour has the same effect on outgoing longwave radiation as a 10% change in CO2 concentration.

More greenhouse gases reduce the transparency of the atmosphere to longwave radiation from the surface.

The top panel of the graph above shows the absorption spectral intensity of the greenhouse gases. Most of the short wave length solar radiation in the visible part of the spectrum is transmitted to the surface. Most of the upward thermal long wave radiation from the surface is absorbed except in the atmospheric window indicated by the blue region. About 16% of the long wave radiation is transmitted directly to space and the rest is absorbed by greenhouse gases. The middle panel shows the total absorption bands by wavelength of downward solar radiation and upward thermal radiation. The gray shading at 100 percent indicates that the energy is fully absorbed at that wavelength. The lower panel shows the absorption of the major greenhouse gases. Comparing the CO2 and H2O absorption spectra shows that much of the CO2 spectrum overlaps with that of water. Parts of the CO2 spectrum are already fully saturated. Adding more CO2 will result in ever diminishing effects as more of the available wavelengths become saturated. The temperature response to adding CO2 to the atmosphere depends on the amount of positive and negative feedbacks from water vapour, clouds and other sources. The temperature effect of increasing CO2 concentration is approximately logarithmic. This means if doubling the CO2 concentration from 300 ppm to 600 ppm, a 300 ppm increase, causes the temperature to rise by 1 °C, it would take another 600 ppm increase to add a further 1 °C temperature gain. Methane has an absorption band (at 8 micrometres) that largely overlaps with water vapour, so an increase in methane has little effect on temperature.
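The logarithmic relationship described above can be sketched in a few lines. This is only an illustration of the arithmetic: the function name `log_warming` is hypothetical, and the 1 °C per doubling figure is the illustrative value used in the text, not a measured sensitivity.

```python
import math

def log_warming(c_ppm, c_ref_ppm=300.0, per_doubling_c=1.0):
    """Temperature change (deg C) for a CO2 change from c_ref_ppm to c_ppm,
    assuming a purely logarithmic response of per_doubling_c per doubling."""
    return per_doubling_c * math.log2(c_ppm / c_ref_ppm)

# 300 -> 600 ppm is one doubling; 300 -> 1200 ppm is two doublings.
for c in (300, 600, 1200):
    print(c, "ppm ->", round(log_warming(c), 2), "deg C")
```

Under this assumption the first 300 ppm increase and the following 600 ppm increase each add the same temperature gain.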

The above diagram shows the upward radiation spectrum from the top of the atmosphere at 20 km with 300 ppm CO2 and 600 ppm CO2 as calculated by the MODTRAN radiative code. (Note that the horizontal axis of this diagram shows wavenumber, or number of wavelengths per cm, which is 10,000 divided by the wavelength in micrometres used in the previous diagram.) This model calculated radiation is very similar to what is actually measured by satellites from space. The green curve shows the emissions spectrum with 300 ppm CO2 in the atmosphere and the blue curve shows the spectrum with 600 ppm CO2 with the same surface temperature and water vapour profile. The model shows that doubling the CO2 concentration changes the spectrum only at the edges of the main CO2 absorption band, at 600 and 740 cm-1. The resulting forcing of 3.39 W/m2 would cause the surface temperatures to increase if not offset by negative feedbacks.
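To relate the wavenumber axis of this diagram to the wavelength axis of the previous one, a small conversion helper may be useful. Because there are 10,000 micrometres in a centimetre, a wavenumber in cm-1 is 10,000 divided by the wavelength in micrometres. The function names below are hypothetical.

```python
def wavenumber_to_wavelength_um(wavenumber_per_cm):
    """Convert wavenumber (cm^-1) to wavelength (micrometres)."""
    return 1.0e4 / wavenumber_per_cm

def wavelength_um_to_wavenumber(wavelength_um):
    """Convert wavelength (micrometres) to wavenumber (cm^-1)."""
    return 1.0e4 / wavelength_um

# Edges of the main CO2 absorption band quoted in the text.
for k in (600.0, 740.0):
    print(k, "cm^-1 =", round(wavenumber_to_wavelength_um(k), 2), "micrometres")
```

The two band edges correspond to wavelengths of roughly 16.7 and 13.5 micrometres.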

CO2 Versus Water's Contribution

CO2, water vapour and clouds have the most significant contributions to the greenhouse effect. Various sources give conflicting estimates of the contributions of these components to the greenhouse effect. The infrared absorption spectrum of atmospheric greenhouse gases is very complex. In some regions absorption frequencies of various greenhouse gases overlap, so the contributions of each component do not add linearly. Radiation at a particular frequency can be absorbed by either water vapour or CO2. The concentration of water vapour is dependent on temperature and varies greatly by both latitude and altitude. Also, water changes from a liquid to a gas with heat energy for the latent heat of evaporation required for the transformation.

Most sources put the greenhouse effect at 33 °C. This is the difference between the current air surface temperature (15 °C) and temperature without the greenhouse effect of gases and clouds, but with the clouds continuing to reflect 31% of the incoming solar radiation.
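The size of this difference can be checked with a simple radiative balance, in which the absorbed sunlight equals the energy radiated by a black body. This is a minimal sketch, not the method used by any particular source. The solar constant of 1361 W/m2 is an assumption not given in the text, and the result shifts by a degree or so with small changes in the solar constant or the reflected fraction.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1361.0  # W/m^2, assumed value (not from the essay)

def effective_temperature_k(albedo, solar_constant=SOLAR_CONSTANT):
    """Black-body temperature (K) that balances absorbed sunlight.
    The factor 4 spreads the intercepted disc of sunlight over the sphere."""
    absorbed = solar_constant * (1.0 - albedo) / 4.0
    return (absorbed / SIGMA) ** 0.25

surface_k = 15.0 + 273.15                  # 15 deg C surface air temperature
no_greenhouse_k = effective_temperature_k(0.31)
print("Without greenhouse effect:", round(no_greenhouse_k, 1), "K")
print("Difference:", round(surface_k - no_greenhouse_k, 1), "deg C")
```

With these inputs the difference comes out in the low-to-mid 30s °C, in line with the figure of about 33 °C that most sources quote.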

Nature does not allocate the contribution of various greenhouse gases - only the total effect is meaningful. Nevertheless, a rough estimate of the contributions can be made. The relative contribution of water, clouds and CO2 to the greenhouse effect can be estimated in two ways: by estimating from radiation models the change to the greenhouse effect by removing one component, and by estimating the greenhouse effect of having only that one component in the atmosphere. If one removes the water vapour & clouds' greenhouse effect the remaining components would trap 34 percent of the heat, implying that water vapour & clouds would trap 66 percent as shown in the "Heat Not Trapped" column of the table below. The sum of the components calculated this way is only 80% of the greenhouse effect due to overlapping absorbing spectra. Similarly, if one includes only water vapour and clouds (no CO2, O3 or Other), they would trap 85% of the long wave radiation. However, the contributions of each component add up to 126% of the greenhouse effect.

It is reasonable to just allocate the overlap proportionally to each component, so the effect is normalized in the "Relative Effect" columns so the sum of the effects equals 100%. This calculation suggests that water vapour & clouds contribute 70% to 80%, and CO2 contributes 10% to 20% of the greenhouse effect as shown in the table below:

| Component | Remove Component: Heat Trapped | Remove Component: Heat Not Trapped | Relative Effect | Component Only: Heat Trapped | Relative Effect | Average of Methods |
|---|---|---|---|---|---|---|
| None | 100 | 0 | | | | |
| Water & Clouds | 34 | 66 | 82.5% | 85 | 67.5% | 75.0% |
| CO2 | 91 | 9 | 11.3% | 26 | 20.6% | 15.9% |
| O3 | 97 | 3 | 3.8% | 7 | 5.6% | 4.7% |
| Other | 98 | 2 | 2.5% | 8 | 6.3% | 4.4% |
| Total | | 80 | 100.0% | 126 | 100.0% | 100.0% |

This gives a rough estimate of component contribution to the current total greenhouse effect, but this tells us almost nothing of the incremental effect of changing the concentration of a component.
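The normalization used for the "Relative Effect" and "Average of Methods" columns can be reproduced with a short calculation. The input numbers are taken from the table; the variable and function names are hypothetical.

```python
# Percent of heat no longer trapped when each component is removed.
heat_not_trapped = {"Water & Clouds": 66, "CO2": 9, "O3": 3, "Other": 2}
# Percent of heat trapped when only that component is present.
component_only = {"Water & Clouds": 85, "CO2": 26, "O3": 7, "Other": 8}

def normalize(values):
    """Scale the values so that they sum to 100%, which allocates the
    spectral overlap proportionally to each component."""
    total = sum(values.values())
    return {name: 100.0 * v / total for name, v in values.items()}

rel_remove = normalize(heat_not_trapped)   # column sums to 80 before scaling
rel_only = normalize(component_only)       # column sums to 126 before scaling
average = {name: (rel_remove[name] + rel_only[name]) / 2 for name in rel_remove}

for name in average:
    print(f"{name:15s} {rel_remove[name]:5.1f} {rel_only[name]:5.1f} {average[name]:5.1f}")
```

Both methods are scaled independently, so each "Relative Effect" column and the average column sum to 100%.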

Water vapour is the most important gas of the Greenhouse Effect. Water vapour is usually considered a feedback, while CO2 is considered a forcing because the residence time of a change in water vapour concentration is very short compared to CO2. Human caused water emissions (other than high altitude airplanes) do not have a significant effect on climate, but water can have a significant effect as a feedback on a temperature change initiated by the Sun or CO2 emissions.

If one magically removed 20% of all water vapour in the atmosphere, water will quickly evaporate from the oceans to replace it so that in 20 days the water concentration will be 99% of the original value as the graph below shows.

Likewise, if humans suddenly doubled our water emissions from the surface, in a few days the increased water vapour will rain out leaving the water vapour concentration almost unchanged. The above graph and absorption values were calculated using the Goddard Institute for Space Studies' General Circulation Model.
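The recovery described above can be illustrated with a simple exponential relaxation. This is only a sketch under an assumption: that the water vapour deficit decays exponentially, which is not stated in the text and is not how the General Circulation Model computes it. The function names are hypothetical.

```python
import math

def e_folding_time_days(initial_deficit, final_deficit, elapsed_days):
    """Time constant of an assumed exponential recovery, given the
    fractional deficit at the start and after elapsed_days."""
    return elapsed_days / math.log(initial_deficit / final_deficit)

def fraction_of_original(t_days, initial_deficit, tau_days):
    """Water vapour as a fraction of its original value after t_days."""
    return 1.0 - initial_deficit * math.exp(-t_days / tau_days)

# 20% removed at day 0, 99% of the original value (1% deficit) at day 20.
tau = e_folding_time_days(0.20, 0.01, 20.0)
print("Implied e-folding time:", round(tau, 1), "days")
for day in (0, 5, 10, 20):
    print(day, round(fraction_of_original(day, 0.20, tau), 3))
```

Under this assumption the figures in the text imply a time constant of about a week, which is consistent with the very short residence time of water vapour.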

These calculations do not include the effects of airplanes. It is so cold at the altitude at which airplanes fly that there is virtually no water vapour. The only time water gets that high is when high ground temperatures cause thermal uplift bringing water up with it. It is too cold up there for water to exist as vapour so droplets form and we see this as airplane vapour trails. These are artificial clouds of the type that traps infrared radiation but passes sunlight, therefore creating a warming effect. Water vapour injected into the upper atmosphere has a much longer residence time than water injected into the atmosphere near the surface, so it may have a minor effect on climate.

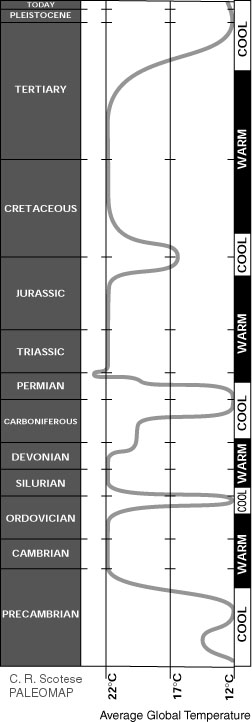

Climate Is Always Changing

The Earth's history shows that the climate has always been changing, over both short-term and long-term time scales. These changes have sometimes been abrupt and severe, without any help from humans. Climate temperature reconstructions are determined from a variety of sources, such as from tree ring width studies and ocean floor sediments. During the last 2 billion years, the Earth has alternated between cool periods like today, and warm periods like when the dinosaurs roamed the planet. The figure below on the left is a temperature reconstruction of the Earth over 2 billion years. Temperatures over this time frame are determined by mapping the distribution of ancient coals, desert deposits, tropical soils, salt and glacial deposits, as well as the distribution of plants and animals that are sensitive to climate, such as alligators, palm trees & mangrove swamps. See here for further information.

Temperature Over Geological Time

The chart above on the right is from here and it shows that CO2 levels have been declining since the end of the Jurassic period to the start of the industrial era. The change in CO2 as indicated by the red line in the red circle is the change in CO2 since the industrial revolution.

The graph below from here shows the estimated CO2 concentrations over the last 5.5 million years on several logarithmic time scales. It shows that CO2 levels 25 million years ago were higher than current levels.

The graph below shows five million years of climate change by combining measurements from 57 globally distributed deep sea sediment cores. The measured quantity is the oxygen 18 isotope fraction, which is a proxy for temperature.

The data is from Lisiecki and Raymo, 2005. The temperature scale was established by fitting the reported temperature variations at Vostok, Antarctica to the observed isotope variations, so the temperature scale is representative of Vostok changes.

The above graph from here shows 25,000 years of Greenland temperature history determined from the Greenland Ice Sheet Project Two (GISP2). After 5 years of drilling through the ice sheet into the bedrock to July 1993, a 3053 m ice core was recovered. By measuring the ratio of two isotopes of oxygen (specifically 18O to the much more common 16O) one can infer the air temperature at the time that the snow in each annual layer crystallized. This technique is considered quite accurate. Strong, abrupt warming is shown by nearly vertical rise of temperatures, strong cooling by nearly vertical drop of temperatures (Modified from Cuffey and Clow, 1997). Dr. Don Easterbrook writes, "Temperature changes recorded in the GISP2 ice core ... show that the global warming experienced during the past century pales into insignificance when compared to the magnitude of profound climate reversals over the past 25,000 years. In addition, small temperature changes of up to a degree or so, similar to those observed in the 20th century record, occur persistently throughout the ancient climate record. ... Over the past 25,000 years, at least three warming events were 20 to 24 times the magnitude of warming over the past century and four were 6 to 9 times the magnitude of warming over the past century."

Ice core results from the North Greenland Eemian Ice Drilling (NEEM) project, published in January 2013, show that the warm period of the last interglacial from 128,000 to 122,000 years ago, known as the Eemian, was 8 +/- 4 °C warmer than the recent millennium. The 2540 m long ice core was drilled during 2008 to 2012. Full results are presented in "Eemian interglacial reconstructed from a Greenland folded ice core".

Northern Hemisphere Temperature History

The graph above shows the northern hemisphere temperature history since the last ice age.

The graph above shows Greenland temperatures as determined by the GISP2 ice core. It is a detailed version of a previous graph above, from here.

Temperature History from North Atlantic Ocean Sediments

The graph above shows temperature variations of the past 3,000 years (during recorded history), as determined from ocean sediment studies in the North Atlantic. [Keigwin, 1996]. Note the rapid variations, as well as the much warmer temperatures 1,000 and 2,500 years ago.

A new temperature reconstruction with decadal resolution, covering the last two millennia, is shown below for the extratropical Northern Hemisphere (90-30 N), utilizing many palaeo-temperature proxy records, from Ljungqvist 2010 here. The shading represents 2 standard deviation errors.

| Abbreviation | Period |
|---|---|
| RWP | Roman Warm Period, AD 1-300 |
| DACP | Dark Age Cold Period, 300-900 |
| MWP | Medieval Warm Period, 800-1300 |
| LIA | Little Ice Age, 1300-1900 |
| CWP | Current Warm Period, 1900-present |

The proxy data shows that parts of the Roman Warm Period and the Medieval Warm Period were as warm as the 1940s. Figure 3 of the Ljungqvist 2010 paper shows that the HadCRUT3 northern extratropical 1990s decadal temperatures were about 0.15 °C higher than the peak of the MWP; however, the proxy data did not record the temperature rise of the second half of the 20th century.

Climate is always changing, as the history of Europe's temperature over the last thousand years shows in the graph below.

1000 year Temperature History IPCC 1990

The temperature history shown above was published in the first IPCC report in 1990, based on Lamb's estimated climate history of Central England.

Clearly, human activity could not have had a significant effect on the temperature changes before 1900. These changes are the result of natural processes.

NASA's GISS temperature graphs since 1880 can be found here. The graph below shows the GISS global annual temperature history. The last year displayed is 2022.

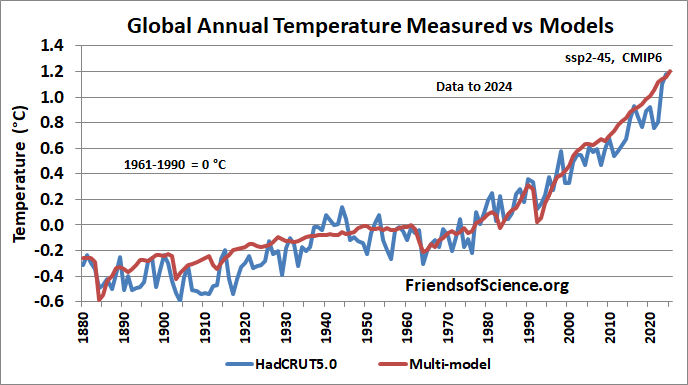

The graph below shows the HadCRUT5 annual temperatures from 1850 to 2022.

HadCRUT5 is the global surface temperature index produced by the Hadley Centre and the Climatic Research Unit, England. It combines land and marine temperature data. The graph above from here shows the annual northern hemisphere, southern hemisphere and global surface temperatures from 1850 to 2022.

The graph below compares the HadCRUT5 global annual temperatures to the multi-model mean of the CMIP6 models used for the IPCC's AR6 report. The multi-model mean doesn't represent the multi-decadal oscillations well but otherwise matches the measured temperature fairly well to 2000. The modellers use high negative aerosol forcing to compensate for the models being too sensitive to greenhouse gases. The models are running too hot during the 21st century despite the measurements being too high due to contamination from urban warming. The 2023-2024 measured temperatures were quite high mostly due to the eruption of the Hunga Tonga-Hunga Ha'apai (Hunga Tonga) volcano. Other factors that caused warming were a reduction in cloud cover that may be partly caused by reduced shipping aerosols, and an El Nino event.

The Hunga Tonga volcano eruption was a submarine explosion at a shallow depth of about 150 m below the sea surface. It ejected 150 million tons of water into the stratosphere. This paper used climate models to find that the dramatic increase of stratospheric water vapour "lead to strong and persistent warming of Northern Hemisphere landmasses in boreal winter." This event will likely cause elevated global temperatures for over a decade. International marine fuel regulations were changed in 2020 to reduce sulfate aerosol emissions from ships. Reduced aerosols may be a significant cause of the recent reduced cloud cover.

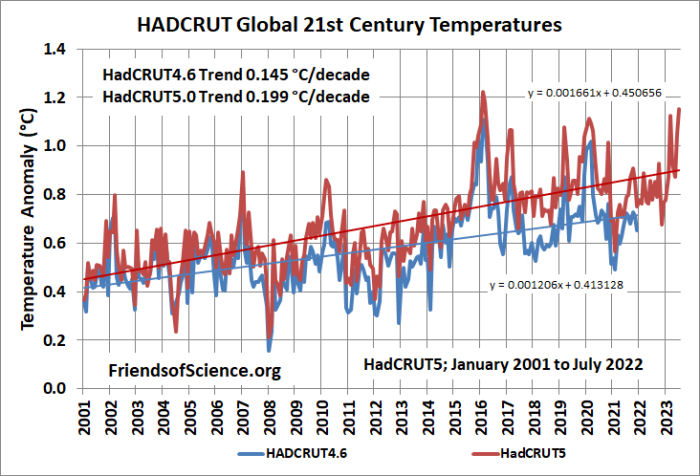

The HadCRUT3 dataset was discontinued in May 2014. The HadCRUT4 dataset was introduced to add more coverage in the northern polar region. The HadCRUT5 data set is an infilled, statistical analysis that extends coverage in data sparse regions. The graph below shows a comparison of the HadCRUT3, HadCRUT4.0, HadCRUT4.2 to 4.6 and HadCRUT5.0 datasets.

The graph below shows the global monthly 21st century temperatures from the HadCRUT4.6 and HadCRUT5.0 datasets, with the best-fit linear trends. The HadCRUT4.6 version ends at December 2021. The HadCRUT5.0 version has a higher slope because it infills data where there are no measurements.
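A best-fit linear trend of the kind quoted for these datasets is an ordinary least-squares slope of temperature against time. The sketch below shows the calculation for a monthly series and expresses the slope per decade. The function name and the input series are hypothetical; no real HadCRUT or UAH data is used.

```python
import math

def trend_per_decade(monthly_anomalies):
    """Ordinary least-squares slope of a monthly series, in deg C per decade."""
    n = len(monthly_anomalies)
    years = [i / 12.0 for i in range(n)]            # time in years from the start
    mean_t = sum(years) / n
    mean_y = sum(monthly_anomalies) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(years, monthly_anomalies))
    sxx = sum((t - mean_t) ** 2 for t in years)
    return 10.0 * sxy / sxx

# Hypothetical 20-year series: a 0.016 deg C/year trend plus an annual wiggle.
series = [0.016 * (i / 12.0) + 0.1 * math.sin(2 * math.pi * i / 12.0) for i in range(240)]
print("Trend:", round(trend_per_decade(series), 3), "deg C/decade")
```

The fitted slope recovers the underlying 0.16 °C/decade to within a small error caused by the superimposed oscillation, which illustrates why trends over short periods are sensitive to events such as El Nino spikes near the ends of the record.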

There has been a lot of attention on sea ice area because anthropogenic global warming (AGW) is predicted to warm polar regions much more than other areas. The graph below shows the global sea ice extent by month and annually from satellite data found here. Sea ice extent is defined as the area within each pixel of satellite data that contains at least 15% sea ice.

The global sea ice extent has been variable with the lowest annual average extent in 2023. The linear trend is -0.55 million km2/decade. See here for graphs of Arctic and Antarctic sea ice extent.
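The 15% threshold in the definition of sea ice extent can be made concrete with a small calculation over a grid of pixels. This is a minimal sketch: the function name, the grid values and the 625 km2 pixel area are all hypothetical, and real satellite products use their own grids and pixel areas.

```python
def sea_ice_extent(concentration, cell_area_km2, threshold=0.15):
    """Total area (km^2) of pixels whose ice concentration is at or above
    the threshold. The full pixel area is counted, not just the icy part."""
    extent = 0.0
    for conc_row, area_row in zip(concentration, cell_area_km2):
        for conc, area in zip(conc_row, area_row):
            if conc >= threshold:
                extent += area
    return extent

# Hypothetical 2 x 3 grid of ice concentrations (fractions) and pixel areas.
concentration = [[0.00, 0.10, 0.15],
                 [0.40, 0.95, 0.14]]
cell_area_km2 = [[625.0, 625.0, 625.0],
                 [625.0, 625.0, 625.0]]
print(sea_ice_extent(concentration, cell_area_km2), "km^2")
```

Three of the six pixels meet the threshold, so the extent is three full pixel areas even though one of those pixels is only 15% ice covered.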

Temperature Leads CO2 Changes

The temperature of the Earth has warmed slightly, about 0.8 degrees Celsius, over the 20th century. Over this time, CO2 concentration in the atmosphere has increased, mostly due to the increased use of fossil fuels. However, the Sun has increased in intensity since 1900 which may have induced much of the observed warming since then. Scafetta and West estimate that the Sun may have caused 10 to 20% of the increase in CO2 during the last century. (See [7] in their paper.) A short-term correlation does not imply that the CO2 increase caused the temperature increase. Causation can be inferred if there is a correlation over several cycles of CO2 concentration changes, with the CO2 change preceding the temperature change. The actual climate history shows no such correlation, and there is no compelling evidence that the recent rise in temperature was caused by CO2. Temperatures have been variable over time, and do not correlate to CO2 concentration. When CO2 concentrations were 10 times higher than they are now we were in a major ice age. As a greenhouse gas, CO2 is vastly outweighed by (natural) water vapour and clouds, which account for over 70% of the greenhouse effect. Human-related CO2 emissions soared after 1940. Yet most of the 20th century's world-wide temperature increase occurred beforehand. See here for a graphic of the carbon cycle.

The CO2 concentration and the lower troposphere temperatures from UAH are shown below. The annual average CO2 concentration has increased from 336.8 parts per million (ppm) in 1979 to 421.1 ppm in 2023.

The actual increase of CO2 concentration averaged 0.5% per year since 1990 and is currently about 0.6%/year.
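The percentage growth rates above can be turned into more tangible numbers with two small helpers. This is only an arithmetic sketch: the function names are hypothetical, and the doubling time assumes the growth rate stays constant, which is an extrapolation and not a claim made in the text.

```python
import math

def ppm_per_year(concentration_ppm, growth_percent_per_year):
    """Absolute yearly increase implied by a percentage growth rate."""
    return concentration_ppm * growth_percent_per_year / 100.0

def doubling_time_years(growth_percent_per_year):
    """Years for the concentration to double at a constant compound rate."""
    return math.log(2.0) / math.log(1.0 + growth_percent_per_year / 100.0)

# 421.1 ppm growing at about 0.6% per year.
print(round(ppm_per_year(421.1, 0.6), 2), "ppm per year")
# Doubling time if growth stayed at the 0.5%/year average since 1990.
print(round(doubling_time_years(0.5)), "years to double")
```

At the current rate the concentration rises by about 2.5 ppm each year, and at a constant 0.5% per year a doubling would take well over a century.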

Fischer et al. (1999) examined records of atmospheric CO2 and air temperature derived from Antarctic Vostok ice cores that extended back in time across a quarter of a million years. Over this immense time span, the three most dramatic warming events experienced on earth were those associated with the terminations of the last three ice ages; and for each and every one of these tremendous global warmings, Earth's air temperature rose well before there was any increase in atmospheric CO2. In fact, the air's CO2 content did not begin to rise until 400 to 1,000 years after the planet began to warm. Ice cores provide a detailed record of local temperature and CO2 concentrations. A study by Caillon et al. (2003) finds that the CO2 increase lagged Antarctic deglacial warming by 800 ± 200 years. The authors measured the isotopic composition of argon-40 and CO2 concentration in air bubbles in the Vostok core during the end of the third most recent ice age (Termination III), 240,000 years before the present. The argon-40 isotope is found to be an excellent proxy for temperature.

Vostok Ice Core Data over End of Third Ice Age BP

CO2 and Argon (Temperature) Age Scales are Shifted 800 years

The CO2 concentration shown by the black line is plotted against age in years before present (BP) on the bottom axis, and the Argon40, a temperature proxy, shown by the grey line is plotted against age on the top axis. The age scale for the CO2 has been shifted by a constant 800 years to obtain the best correlation of the two data sets. The correlation shows that temperature changes precede CO2 concentration changes by about 800 years.
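Finding the shift that gives the best correlation between two series can be sketched as a search over candidate lags. The example below is a generic illustration and not the procedure used by Caillon et al. The function names, the 100-year sample spacing and both series are hypothetical; the follower series is built as an exact delayed copy of the leader so that the expected answer is known.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def best_lag(leader, follower, max_lag):
    """Return (lag, r) maximising the correlation of leader[i] with follower[i + lag]."""
    best = (0, -2.0)
    for lag in range(max_lag + 1):
        r = pearson(leader[:len(leader) - lag], follower[lag:])
        if r > best[1]:
            best = (lag, r)
    return best

STEP_YEARS = 100  # hypothetical spacing between samples
# Hypothetical temperature proxy, in forward time order.
temp = [math.sin(2 * math.pi * i / 60) + 0.3 * math.sin(2 * math.pi * i / 17) for i in range(200)]
# Hypothetical CO2 series: the same signal delayed by 8 samples (800 years).
co2 = [0.0] * 8 + temp[:-8]

lag, r = best_lag(temp, co2, max_lag=20)
print("Best lag:", lag * STEP_YEARS, "years, correlation", round(r, 3))
```

With real ice core data the two records are unevenly sampled and carry dating uncertainty, so the best lag comes with an error range such as the ± 200 years reported in the study.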

These findings confirm that an increase in CO2 has never initially caused an increase in temperature during a deglaciation. Temperature increases cause the oceans to expel CO2 because CO2 is more soluble in cold water, increasing the CO2 content of the atmosphere. When temperature is at its maximum in each cycle and starts to fall, CO2 concentrations continue to increase for another 800 years! As CO2 increases, temperatures fall. This is the opposite of what one would expect if CO2 were a primary climate driver. The ice core data proves that CO2 is not a primary climate driver. One must invoke reverse time causality to claim the ice core data shows CO2 causes temperature change, like suggesting actions taken today can affect the conquests of Mongol leader Genghis Khan. Logic demands that cause must precede effect. Increases in air temperature drive increases in atmospheric CO2 concentration.

A more recent portion of the Vostok ice core record from Joanne Nova's Skeptics Handbook #1, found here, is shown below.

See CO2Science for more information. See here for the Caillon et al. (2003) paper. A graph of the Vostok ice core data over 420,000 years is shown below. A large version is here.

Sun Activity Correlates With Temperature

Numerous papers published in major peer-reviewed scientific journals show the Sun is the primary driver of climate change. There is a very strong correlation between solar activity and temperature.

Early in the nineteenth century, William Herschel (1738-1822), discoverer of Uranus, found that five periods of low number of sunspots corresponded to high wheat prices when the temperatures were cold. (Cold climate reduces the supply of wheat causing its price to rise.) See "The Varying Sun & Climate Change", Soon & Baliunas, 2003.

E. Friis-Christensen and K. Lassen have shown that the length of the mean 11-year sunspot cycle correlates with the northern hemisphere temperature during the past 130 years. The length of the sunspot cycle is known to vary with solar activity: high solar activity implies a short sunspot cycle length. See here for further information.

See here for an updated plot based on Friis-Christensen and Lassen's methodology.

Here is a correlation of the sunspot cycle length, global temperature and CO2 concentrations.

Sunspot Cycle Length Temperature and CO2

The red squares on the graph represent the sunspot cycle lengths. One point is the cycle length from the time of the maximum number of sunspots to the time of the maximum number of sunspots of the next cycle, and the following point is the cycle length from the time of the minimum number of sunspots to the time of the minimum number of sunspots of the next cycle. The sunspot cycles are back filtered using weighting 1,2,3,4 applied to each cycle point, both min to min and max to max. This assumes that the current cycle has the most effect on temperature (weight 4), and previous half cycles affect current temperatures in declining amounts, but future cycles have no effect on the current temperature. The temperature curve in blue used the HadCRUT3 land and sea data to 1978, the MSU satellite data from 1984 to 2006, and the average of the datasets for 1979 to 1983. This eliminates much of the urban heat island effects. The temperatures are unfiltered annual. The CO2 concentrations (ppmv) from 1958 to 2007 are derived from air samples collected at the Mauna Loa Observatory, Hawaii. CO2 concentrations prior to 1958 are uncertain.
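The back filter with weights 1, 2, 3, 4 can be sketched as a trailing weighted mean. This is an illustrative reading of the description above, not the author's actual spreadsheet: the function name, the sample cycle lengths and the handling of the first few points (where fewer than four past values exist) are assumptions.

```python
def back_filter(values, weights=(1, 2, 3, 4)):
    """Trailing weighted mean; the last weight applies to the current point."""
    out = []
    for i in range(len(values)):
        # Assumption: at the start of the series, use only the points that exist,
        # keeping the largest weights for the most recent ones.
        k = min(len(weights), i + 1)
        w = weights[-k:]
        window = values[i - k + 1 : i + 1]
        out.append(sum(a * b for a, b in zip(w, window)) / sum(w))
    return out

# Hypothetical half-cycle points (years), alternating max-to-max and min-to-min.
cycle_lengths = [11.0, 10.0, 12.0, 11.5, 10.5]
filtered = back_filter(cycle_lengths)
print(filtered)
```

Because the window only looks backward, a later cycle never changes an earlier filtered value, which matches the stated assumption that future cycles have no effect on the current temperature.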

Note that there is a correspondence between sunspot cycle length and temperature. Both the temperature and the cycle length curves begin to rise at 1910, and temperatures fall after 1945 to 1975 when the cycle length curve falls, and both curves rise again after 1975. Temperatures have been increasing since 1980 faster than can be explained by the sunspot cycle length, indicating a possible human CO2 contribution. The recent increase of the cycle lengths explains why there has been no warming since 2002. Temperature changes are expected to follow Sun activity changes due to a time lag resulting from the large heat capacity of the oceans.

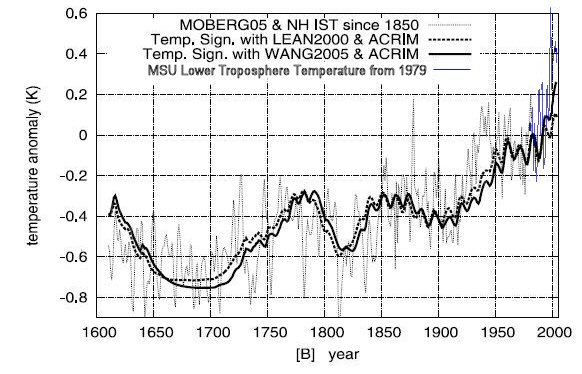

N. Scafetta of Duke University, Durham, NC and B.J. West of the US Army Research Office, NC studied the solar impact on 400 years of the Northern Hemisphere temperatures since 1600. They find good correspondence between temperature and solar irradiance proxy reconstructions up until 1920 as shown on the graph below.

Northern Hemisphere Temperature vs Solar Irradiance 400 years

The temperature curve is derived from proxy records to 1850 by Moberg et al. [2005], and from instrumental surface temperature data from 1850 to about 1980. The surface temperature record includes the urban heat island (UHI) and land use changes effects. The Northern Hemisphere MSU lower troposphere record is shown from 1979 in blue, which eliminates most of the UHI effects. Two different solar irradiance proxy reconstructions are shown: Lean, 2000; Wang et al., 2005. Both curves merge the ACRIM satellite data since 1980 with the proxy data. By assuming ACRIM, the solar activity has an increasing trend during the second half of the 20th century. This graph is modified from the version created by Scafetta and West, which uses the contaminated instrument record after 1979 instead of the satellite data. See the original version here.

Note the low solar activity periods occurring during the Maunder Minimum (1645 to 1715, the Little Ice Age) and during the Dalton Minimum (1795 to 1825).

Note the excellent correlation from 1600 to 1900 when humans were unlikely to affect climate. During the 20th century one continues to observe a significant correlation between the solar and temperature patterns: both records show an increase from 1900 to 1950, a decrease from 1950 to 1970, and again an increase from 1970 to 2000.

A divergence of the curves from the Scafetta and West original graph indicates that the Sun is responsible for 56% using Lean 2000, and 69% using Wang 2005, of the northern hemisphere warming from 1900 to 2005. The authors estimate the error at 20%.

There are two solar composites available from satellite data. The ACRIM is obtained directly from high precision satellite data.

There is a gap (1989 - 1992) in the satellite record that was due to a delay in launching a new ACRIM satellite after the 1986 Space Shuttle Challenger disaster. The delay caused a gap of two years in the ACRIM system that measures solar irradiance. The only data available to fill the gap was from a different monitor called the Earth Radiation Budget (ERB) system, which wasn’t designed to monitor the Sun. It had little precision and only had a view of the Sun during brief intervals of its orbit. The ACRIM record suggested an increase in solar irradiance from the early 1980s through to the end of the 1990s. A rival group called PMOD claimed that the ERB sensors experienced an increase in their sensitivity over the gap period, so they adjusted downward the second ACRIM satellite data to show a decline of solar intensity. Dr. Douglas Hoyt, the scientist who had been in charge of the ERB satellite mission, said “there is no known physical change in the electrically calibrated [system] that could have caused it to become more sensitive. And no one has ever come up with a physical theory for the instrument that could cause it to become more sensitive.” The IPCC reports have downplayed the role of solar activity in recent climate change by using only the PMOD solar irradiance interpretation.

The authors did a similar analysis using the Mann and Jones 2003 temperature reconstruction. This temperature history shows little variation before 1900 and shows a hockey stick shape. This reconstruction has been severely criticized for several reasons. See The IPCC Hockey Stick section of this essay. The authors found that the Mann and Jones 2003 reconstruction (when compared to the Lean 2000 data) results in an unphysical zero response time to solar forcing. The ocean's large heat capacity should result in a time lag of surface temperatures with respect to long time solar changes of several years, so this reconstruction cannot be correct.

The authors' analysis shows the Sun has contributed 50 to 69% of the surface warming depending on the reconstructions utilized. The remainder may be due to CO2, UHI and land use changes. The authors compare the Sun's irradiance to the Northern Hemisphere land surface temperatures, which are contaminated with the urban heat island effect. The global MSU satellite temperatures, which are not contaminated by the UHI effect, have increased by half as much as the Northern Hemisphere temperatures since 1980. If the Scafetta and West analysis used the uncontaminated satellite data since 1980, the results would show that the Sun has contributed at least 75% of the global warming of the last century. See more about the UHI effect later in this essay. See here for the November 2007 article.

Climate alarmists claimed that solar activity couldn’t possibly have anything to do with the warming of the late 20th century because sunspot numbers peaked about 1960 and then declined while global temperatures rose over the second half of the 20th century. The solar activity curve, which was updated in 2015, shows total solar irradiance peaked in 1990 with solar cycle 22. Solar activity isn’t just sunspot numbers. Lüning and Vahrenholt write, “The sun not only reached its maximum at the end of the 20th century, but was apparently stronger than at any time over the past 10,000 years.” The graph below shows sunspot numbers and total solar irradiance (TSI), source.

A group of NASA and university scientists has found convincing evidence of a link between the Sun's activity and climate by comparing the records of the historical water level of the Nile River to the number of auroras observed in northern Europe and the Far East between 622 and 1470 AD. Auroras are bright glows in the night sky following solar flares, and are an excellent means of tracking solar activity. See this link for further information.

A study by WJR Alexander et al, published June 2007 compared hydrometeorological data to solar variability. The study looked at rainfall, river flow and flood data. The authors conclude that there is "an unequivocal synchronous linkage between these processes in South Africa and elsewhere, and solar activity." The study included an analysis of the level of Lake Victoria, which has been carefully monitored since 1896. In the early 1960s a dramatic rainfall increase significantly raised the lake level, and the level since then has been falling at about 29 mm per year. The decline has been removed from the data plotted below. The plot shows two periods of strong correlation between lake level and sunspot number, corresponding to periods of high levels of volcanic dust.

Lake Victoria Water Level and Sunspot Number

See the paper "Linkages between solar activity, climate predictability and water resource development" here.

Longer term, here is a correlation of a solar proxy to a temperature proxy for a period of 3000 years. Values of carbon-14 (produced by cosmic rays hence a proxy for solar activity) correlate extremely well with oxygen-18 (temperature proxy). The lower graph shows a particularly well-resolved time interval from 8,350 to 7,900 years BP.

The above graph summarizes data obtained from a stalagmite from a cave in Oman, as reported in the paper, Neff, U., et al. 2001.

A team of researchers led by scientists from the Max Planck Institute for Solar System Research analysed radioactive isotopes in trees and found that the Sun has been more active in the last half of the 20th century than at any time in the last 8000 years. This study showed that the current episode of high solar activity since about the year 1940 is unique within the last 8000 years. See a press release here. A graph from the study is below. The bottom chart is a detail of the shaded period of the top chart from 9300 to 8600 years before the present.

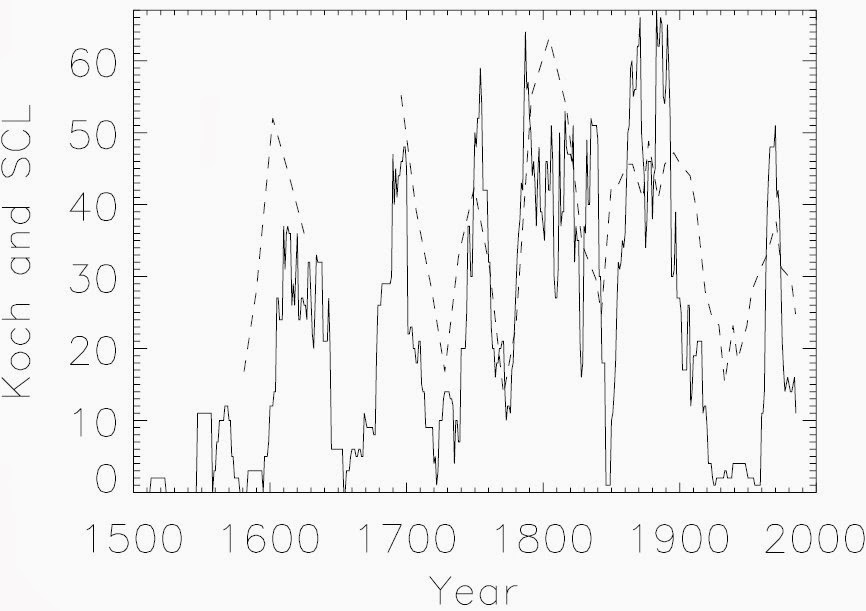

A study published by the Danish Meteorological Institute compares the Koch ice index which describes the amount of ice sighted from Iceland, in the period 1150 to 1983 AD, to the solar cycle length, which is a measure of solar activity. The study finds "A close correlation (R=0.67) of high significance (0.5 % probability of a chance occurrence) is found between the two patterns, suggesting a link from solar activity to the Arctic Ocean climate."

Tim Patterson, an adviser to the FoSS, has studied high-resolution Holocene climate records from fjords and coastal lakes in British Columbia and demonstrates a link between temperature and solar cycles.

The spectral analysis shown here is from sediment cores obtained from Effingham Inlet, Vancouver Island, British Columbia. The annually deposited laminations of the core are linked to the changing climate conditions. The analysis shows a strong correlation to the 11-year sunspot cycle.
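As an illustration of how a spectral analysis picks out a dominant cycle in an annually resolved record, here is a minimal periodogram sketch. It uses a direct discrete Fourier transform on synthetic data; the series, its length and the function name are assumptions for illustration and are not the Effingham Inlet core data or the method used in that study.

```python
import cmath
import math

def periodogram(x):
    """Return (period_in_samples, power) pairs for DFT frequencies k = 1 .. n//2."""
    n = len(x)
    mean = sum(x) / n
    centred = [v - mean for v in x]  # remove the mean so k = 0 does not dominate
    result = []
    for k in range(1, n // 2 + 1):
        coeff = sum(v * cmath.exp(-2j * math.pi * k * t / n)
                    for t, v in enumerate(centred))
        result.append((n / k, abs(coeff) ** 2 / n))
    return result

# Synthetic annual series (hypothetical): 220 years containing an 11-year
# cycle plus a weaker 55-year cycle.
series = [math.sin(2 * math.pi * t / 11) + 0.5 * math.sin(2 * math.pi * t / 55)
          for t in range(220)]

peak_period, peak_power = max(periodogram(series), key=lambda p: p[1])
print(peak_period)
```

A direct DFT is slow for long records but keeps the sketch dependency-free; real sediment records would also need detrending and a significance test against a noise background.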

See here for a powerpoint slide show by Tim Patterson.

A paper by Soon et al 2015 titled "Re-evaluating the role of solar variability on Northern Hemisphere temperature trends since the 19th century" in section 5 compares an updated Hoyt & Schatten total solar irradiance reconstruction to Arctic and Northern Hemisphere temperature composites that are based on mostly rural temperature data to remove the effects of urban development. The general agreement between the temperature and solar activity trends is striking. The graph below shows a correlation of R² = 0.48, implying that solar variability has been the dominant influence on Northern Hemisphere temperature trends since at least 1881.

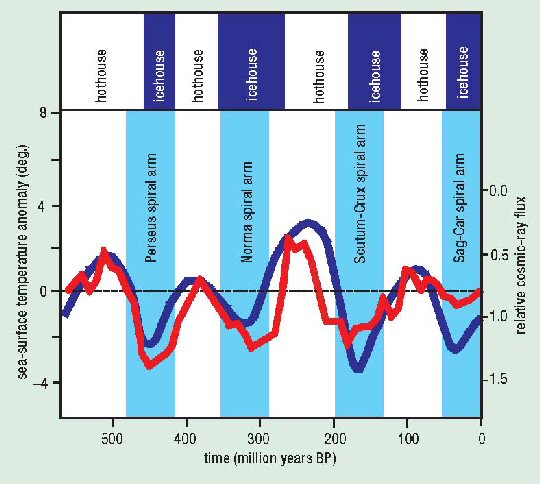

N. Shaviv and J. Veizer, using seashell thermometers, show a strong correlation between temperature and the cosmic ray flux over the last 520 million years.

Cosmic Ray Flux and Tropical Temperature Variation Over the Phanerozoic 520 million years

The upper curves describe the cosmic ray flux (CRF) using iron meteorite exposure age data. The blue line depicts the nominal CRF, while the yellow shading delineates the allowed error range. The two dashed curves are additional CRF reconstructions that fit within the acceptable range. The red curve describes the nominal CRF reconstruction after its period was fine-tuned to best fit the low-latitude temperature anomaly. The bottom black curve depicts the smoothed temperature change derived from calcitic shells over the Phanerozoic. The red line is the predicted temperature model for the red curve above. The green line is the residual. The top blue bars indicate ice ages.

A paper by Nicola Scafetta, May 2012, titled "A shared frequency set between the historical mid-latitude aurora records and the global surface temperature" compares the historical records of mid-latitude auroras from 1700 to the surface temperature records. It shows that aurora records share the same oscillation frequencies evident in the temperature record and in several planetary and solar records. The author argues that the aurora records reveal a physical link between climate change and astronomical oscillations. The abstract states:

"In particular, a quasi-60-year large cycle is quite evident since 1650 in all climate and astronomical records herein studied ... The existence of a natural 60-year cyclical modulation of the global surface temperature induced by astronomical mechanisms, by alone, would imply that at least 60 to 70% of the warming observed since 1970 has been naturally induced. Moreover, the climate may stay approximately stable during the next decades because the 60-year cycle has entered in its cooling phase."

More analysis is presented by Scafetta in his 2011 presentation "Heliospheric oscillations and their implication for climate oscillations and climate forecast" at the 3rd Santa Fe Conference on Global and Regional Climate Change.

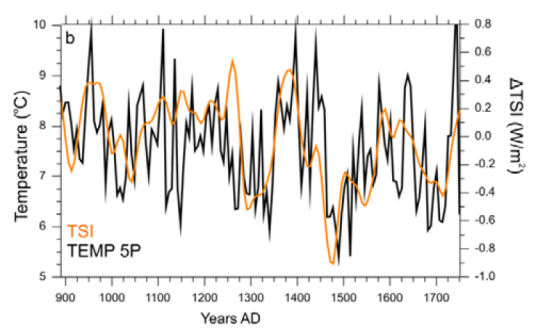

A paper published by Moffa-Sánchez et al in Nature Geoscience, March 2014, titled "Solar Forcing of North Atlantic Surface Temperature and Salinity Over the Past Millennium" found that solar activity correlates well with North Atlantic temperatures. The abstract states:

"There were several centennial-scale fluctuations in the climate and oceanography of the North Atlantic region over the past 1,000 years, including a period of relative cooling from about AD 1450 to 1850 known as the Little Ice Age. These variations may be linked to changes in solar irradiance, amplified through feedbacks including the Atlantic meridional overturning circulation. ... low solar irradiance promotes the development of frequent and persistent atmospheric blocking events, in which a quasi-stationary high-pressure system in the eastern North Atlantic modifies the flow of the westerly winds. We conclude that this process could have contributed to the consecutive cold winters documented in Europe during the Little Ice Age."

The graph below, adapted from figure 2, presents the three-point smoothed RAPiD-17-5P temperature record in black. Overlain is the total solar irradiance (ΔTSI) that has been shifted with a 12.4 year lag. This clearly shows a high correlation between temperature and TSI.

Further review of Moffa-Sánchez's work is provided at THE HOCKEY SCHTICK.

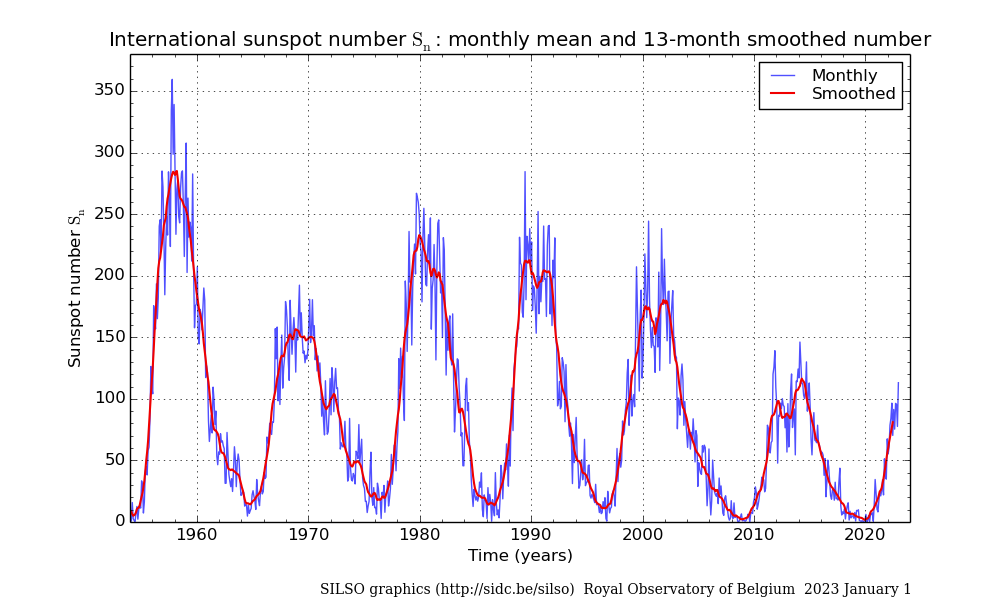

The Sunspot Index and Long-term Solar Observations (SILSO) sunspot number graph showing six cycles is shown below. The data is from the Royal Observatory of Belgium, Brussels. Sunspot Cycle 24 has a smoothed sunspot number maximum of about 115 in 2014.

The monthly mean sunspot numbers and the centered 13-month average are shown. The data is here. The NOAA solar cycle image below is from here.
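A centered 13-month average can be sketched as follows. The function name and the sample values are assumptions for illustration. The optional `tapered` flag gives half weight to the two end months, a commonly used variant for smoothed sunspot numbers; which variant a particular plot uses should be checked against its data source.

```python
def centered_13_month(values, tapered=False):
    """Centered 13-month running mean; None where the window is incomplete."""
    half = 6
    weights = [1.0] * 13
    if tapered:
        # Half weight on the first and last month, so the weights sum to 12.
        weights[0] = weights[-1] = 0.5
    total = sum(weights)
    out = [None] * len(values)
    for i in range(half, len(values) - half):
        window = values[i - half : i + half + 1]
        out[i] = sum(w * v for w, v in zip(weights, window)) / total
    return out

# Hypothetical monthly values: a simple ramp, so the smoothed value at each
# month equals the raw value at that month.
monthly = [float(m) for m in range(24)]
smoothed = centered_13_month(monthly)
smoothed_tapered = centered_13_month(monthly, tapered=True)
print(smoothed[6], smoothed_tapered[6])
```

The first and last six months have no smoothed value, which is why a smoothed curve always ends six months before the latest monthly observation.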

A new model of the Sun has produced unprecedentedly accurate predictions of the Sun's variable solar cycles. The model uses two solar dynamos, one near the solar surface and one deeper in the convection zone. The model was described in a paper by Shepherd et al 2014 here and described here. The model predicts that solar activity will fall from cycle 24 activity by 60 per cent during the 2030s to conditions last seen during the 'mini ice age' that began in 1645.

Sun And Cosmic Rays

During the 20th century the Sun has continued to warm and may have contributed directly to a third of the warming over the last hundred years. The change in solar output is too small to directly account for most of the observed warming. However, the Sun-Cosmic Ray connection provides an amplification mechanism by which a small change in solar irradiance will have a large effect on climate.

A paper by H. Svensmark and E. Friis-Christensen of the Center for Sun-Climate Research of the Danish National Space Center in Copenhagen has shown that cosmic rays highly correlate to low cloud formation. Changes in the intensity of galactic cosmic rays alter the Earth's cloudiness.

An experiment in 2005 shows the effect of cosmic rays in a reaction chamber containing air and trace chemicals found over the oceans. Electrons released in the air by cosmic rays act as a catalyst in making aerosols. They significantly accelerate the formation of stable, ultra-small clusters of sulphuric acid and water molecules, which are the building block for the cloud condensation nuclei.

Danish scientists reported in May 2011 that they have succeeded for the first time in directly observing that the electrically charged particles coming from space and hitting the atmosphere at high speed contribute to creating the aerosols that are the prerequisites for cloud formation. In a climate chamber at Aarhus University, scientists have created conditions similar to the atmosphere at the height where low clouds are formed. This artificial atmosphere was irradiated with fast electrons from ASTRID, Denmark's largest particle accelerator. The experiments show that increased radiation from cosmic rays leads to more aerosols. In the atmosphere, these aerosols grow into actual cloud nuclei in the course of hours or days. Water vapour condenses on the nuclei, forming small cloud droplets. See the paper here.

A team of 63 scientists published results in August 2011 of a much more sophisticated experiment which investigated the effects of cosmic rays on cloud formation. The CLOUD (Cosmics Leaving OUtdoor Droplets) experiment at CERN (European Organization for Nuclear Research) in Geneva shows big effects of pions from an accelerator, which simulate the cosmic rays and ionize the air in the experimental chamber. The CLOUD experiment is the most rigorous test of the Cosmic Ray hypothesis yet devised. The experiments show that cosmic rays strongly enhance the formation rate of aerosols by up to tenfold, and confirm the earlier results from the Danish experiment. The aerosols may grow into cloud condensation nuclei on which cloud droplets form. See the CERN press release here.

The graph below shows the aerosol particle concentration growth in the CLOUD chamber. In an early-morning experimental run at CERN, starting at 03:45, ultraviolet light began making sulphuric acid molecules in the chamber, while a strong electric field cleansed the air of ions. As soon as the electric field was switched off at 04:33, natural cosmic rays raining down through the roof helped to build clusters at a higher rate. When CLOUD simulated stronger cosmic rays with a beam of charged pion particles starting at 04:58, the rate of cluster production became faster still. The various colours are for clusters of different diameters (in nanometres) as recorded by various instruments. The largest (black) took longer to grow than the smallest (blue). The CLOUD results also show that trace vapours assumed until now to account for aerosol formation in the lower atmosphere can explain only a tiny fraction of the observed atmospheric aerosol production.

Coronal mass ejections from the Sun cause a large decrease in the cosmic ray count, which is called a Forbush decrease. These dramatic, short-term cosmic ray decreases can be used to confirm the cosmic ray effects on clouds. The magnetic plasma clouds from solar coronal mass ejections provide a temporary shield against galactic cosmic rays.

A study by Svensmark et al in 2009 shows that decreases in cosmic rays have a large effect on the amount of aerosols, cloud cover and the liquid water content of clouds. The authors conclude "From solar activity to cosmic ray ionization to aerosols and liquid-water clouds, a causal chain appears to operate on a global scale."

The figure below shows the evolution of fine aerosol particles in the lower atmosphere (AERONET), cloud water content (SSM/I), liquid water cloud fraction (MODIS), and low IR-detected clouds (ISCCP), averaged for the 5 strongest Forbush decreases in the period 1987-2007. The red dashed line shows the average cosmic ray count percent change. The lowest aerosol count occurs 5 days after the Forbush minimum, and the cloud water content minimum occurs 4 days later. The response in cloud water content for the larger events is about 7%.

The broken horizontal lines denote the mean for the first 15 days before the Forbush minimum of each of the four data sets.

Source.
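Averaging a daily record over several events aligned on the Forbush minimum, with a baseline taken from the first 15 days before it, is a superposed epoch analysis. The sketch below shows the idea on made-up data; the function name, window lengths, event dates and dip sizes are assumptions for illustration and do not reproduce the AERONET, SSM/I, MODIS or ISCCP data.

```python
def superposed_epoch(series, event_indices, before=15, after=20):
    """Average `series` over windows aligned on each event index.

    Returns (composite, baseline), where composite[before] is the event day and
    baseline is the mean of the composite over the `before` days preceding it.
    """
    windows = [series[i - before : i + after + 1]
               for i in event_indices
               if i - before >= 0 and i + after < len(series)]
    if not windows:
        raise ValueError("no event has a complete window")
    n = len(windows)
    composite = [sum(w[j] for w in windows) / n for j in range(before + after + 1)]
    baseline = sum(composite[:before]) / before
    return composite, baseline

# Hypothetical daily record: flat at 100 with a dip 5 days after each event.
daily = [100.0] * 200
events = [50, 120]
daily[55] = 93.0
daily[125] = 95.0

composite, baseline = superposed_epoch(daily, events)
day_of_minimum = composite.index(min(composite)) - 15   # days after the event
percent_change = 100.0 * (min(composite) - baseline) / baseline
print(day_of_minimum, percent_change)
```

Averaging over events suppresses weather noise that is unrelated to the events, which is why the composite can show a response that is hard to see in any single case.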

Data from the International Satellite Cloud Climatology Project and the Huancayo cosmic ray station show a remarkable correlation between low clouds (below 3 km) and cosmic rays. There are more than enough cosmic rays at high altitudes, so changes in the cosmic rays do not affect high clouds. But fewer cosmic rays penetrate to the lower clouds, so they are sensitive to changes in cosmic rays.

Cosmic Rays and Low Clouds

The blue line shows variations in global cloud cover collated by the International Satellite Cloud Climatology Project. The red line is the record of monthly variations in cosmic-ray counts at the Huancayo station.

Low-level clouds cover more than a quarter of the Earth's surface and exert a strong cooling effect on the surface. A 2% change in low clouds during a solar cycle will change the heat input to the Earth's surface by 1.2 watts per square metre (W/m2). This compares to the total warming of 1.4 W/m2 the IPCC cites in the 20th century. (The IPCC does not recognize the effect of the Sun and Cosmic rays, and attributes the warming to CO2.)

Cosmic ray flux can be determined from radioactive isotopes such as beryllium-10, or the Sun's open coronal magnetic field. The two independent cosmic ray proxies confirm that there has been a dramatic reduction in the cosmic ray flux during the 20th century as the Sun has gained intensity and the Sun's coronal magnetic field has doubled in strength.

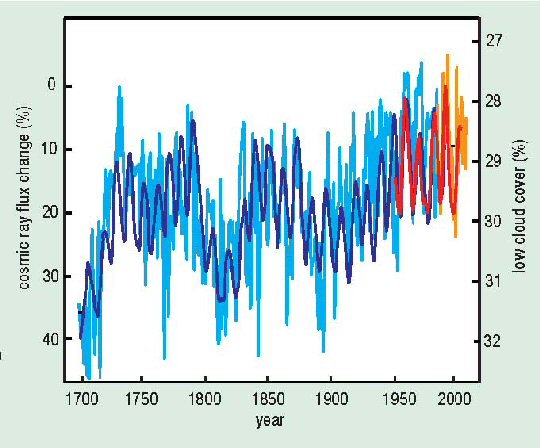

Cosmic Ray Flux Since 1700

Changes in the flux of galactic cosmic rays since 1700 are here derived from two independent proxies, 10Be (light blue) and open solar coronal flux (dark blue) (Solanki and Fligge 1999). Low cloud amount (orange) is scaled and normalized to observational cosmic-ray data from Climax (red) for the period 1953 to 2005 (3 GeV cut-off). Both scales are inverted to correspond with rising temperatures. Note that high cosmic ray flux around 1700 is at the end of the Little Ice Age. Also note the increase in cosmic ray flux after 1780 at the time of the Dickens Winters.

The graph below shows a correlation between the cosmic ray counts and the global troposphere temperature radiosonde data. The cosmic ray scale is inverted to correspond to increasing temperatures. High solar activity corresponds to low cosmic ray counts, reduced low cloud cover, and higher temperatures. The upper panel shows the troposphere temperatures in blue and the cosmic ray count in red. The lower panel shows the match achieved by removing El Nino, the North Atlantic Oscillation, volcanic aerosols and a linear trend of 0.14 degrees Celsius/decade.
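Removing El Nino, volcanic aerosols and a linear trend from a temperature series is typically done with a multiple linear regression, keeping the residual. The sketch below is one way to do that with ordinary least squares on synthetic data. It is not the procedure from the cited paper: the function names, the stand-in indices and all coefficients are assumptions for illustration.

```python
import math

def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def fit_and_remove(y, regressors):
    """Least-squares fit y ~ constant + regressors; return (coefficients, residuals)."""
    cols = [[1.0] * len(y)] + [list(r) for r in regressors]
    k = len(cols)
    # Normal equations: (A^T A) coef = A^T y
    ata = [[sum(p * q for p, q in zip(cols[i], cols[j])) for j in range(k)]
           for i in range(k)]
    aty = [sum(p * q for p, q in zip(cols[i], y)) for i in range(k)]
    coef = solve(ata, aty)
    fitted = [sum(coef[i] * cols[i][t] for i in range(k)) for t in range(len(y))]
    return coef, [yt - ft for yt, ft in zip(y, fitted)]

# Synthetic monthly data (all hypothetical stand-ins, not real indices).
months = range(300)
time_decades = [m / 120 for m in months]
enso = [math.sin(2 * math.pi * m / 45) for m in months]
volcanic = [1.0 if 100 <= m < 130 else 0.0 for m in months]
solar = [0.05 * math.cos(2 * math.pi * m / 132) for m in months]
temps = [0.2 + 0.14 * t + 0.1 * e - 0.3 * v + s
         for t, e, v, s in zip(time_decades, enso, volcanic, solar)]

coef, residual = fit_and_remove(temps, [time_decades, enso, volcanic])
print([round(c, 3) for c in coef])
```

The residual is whatever the chosen regressors cannot explain, so its interpretation depends on which terms are included and on how strongly they overlap with the signal of interest.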

The negative correlation between cosmic ray counts and troposphere temperatures is very strong, indicating that the Sun is the primary climate driver. H. Svensmark and E. Friis-Christensen published the above graph in a paper in October 2007 in response to a paper by M. Lockwood and C. Fröhlich, in which they argue that the historical link between the Sun and climate came to an end about 20 years ago. However, the Lockwood paper had several deficiencies, including the problem that they used surface temperature data that is contaminated by the urban heat island effect (see below). They also fail to account for the large time lag between long-term solar intensity changes and the climate temperature response.

See Svensmark's rebuttal and Gregory's critique of the Lockwood paper.

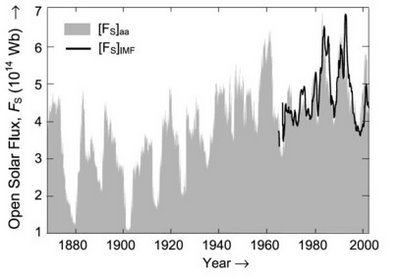

Over the 20th century the Sun has increased activity and irradiance intensity, directly providing some warming. The graph below from here shows the rising solar flux during most of the twentieth century.

Open Solar Flux

Dr. U.R. Rao of Bangalore, India, shows that galactic cosmic rays, using 10Be measurements in deep polar ice as the proxy, have decreased by 9% during the last 150 years. The decrease in cosmic rays causes a 2.0% decrease in low cloud cover resulting in a radiative forcing of 1.1 W/m2, which is about 60% of that due to the CO2 increase during the same period.

In the top panel showing cosmic ray intensity, the continuous line represents estimated Climax neutron monitor counting rate (1956-2000), open circles denote ionization chamber measurements during (1933-1956) and filled circles represent cosmic ray intensity derived from 10Be (1801-1932). 10Be is a long-lived radioactive beryllium isotope produced by cosmic rays. The middle panel shows the near-Earth helio-magnetic field and the lower panel shows the sunspot number.

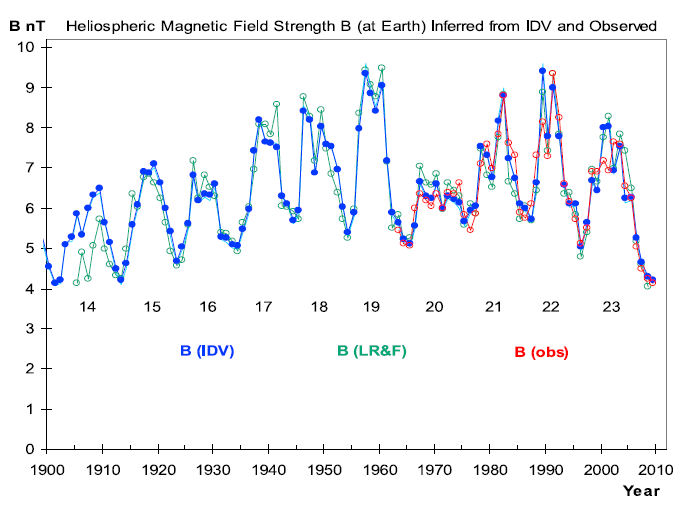

A reconstruction of the near Earth heliospheric magnetic field strength from 1900 through 2009 from here by Svalgaard and Cliver (2010) is shown below.

The red curve shows direct satellite measurements of the near-Earth heliospheric magnetic field (HMF) strength resulting from the solar wind. The blue curve is the Inter-Diurnal Variability (IDV) index calculated from the geomagnetic field observations one hour after midnight. The IDV is highly correlated with the near-Earth HMF. The green values are estimates of HMF by Lockwood et al 2009.

When the Sun is active it has a higher number of sunspots and emits more solar wind - a continuous stream of very high-speed charged particles. The increased solar wind and magnetic field repel cosmic rays that otherwise would hit the Earth's atmosphere, resulting in fewer aerosols in the lower atmosphere, thereby reducing low cloud formation. The low clouds have a high reflectivity and have a strong cooling effect by reflecting sunlight back into space.

In summary, the process is:

More active Sun → more sunspots → more solar wind → fewer cosmic rays → fewer aerosols → fewer low clouds → more sunlight to the surface → more global warming.

The theory of CO2 warming implies that the Arctic and Antarctica should be warming about the same, and the polar regions should be warming more than the rest of the Earth. However, Antarctica has not warmed since 1975, which is a big problem for the CO2 theory. The ice covering Antarctica has even higher reflectivity than low clouds, so fewer low clouds cools Antarctica, while fewer low clouds warms the rest of the planet. (Greenland's ice sheet is much smaller and is not so reflective.) This Antarctica temperature trend is strong evidence that the Sun, not CO2, is the primary climate driver.

Antarctica and North America Temperature Trends

The top curve is the North American surface temperature and the bottom curve is the Antarctica (64 S - 90 S) surface temperature over the past 100 years. The Antarctic data have been averaged over 12 years to minimize the temperature fluctuations. The blue and red lines are fourth-order polynomial fits to the data. The curves are offset by 1 K for clarity; otherwise they would cross and re-cross three times.

The cosmic ray flux is not only influenced by the solar wind, it also varies with the position of the solar system in the galactic arms. The solar system passes through the arms of the Milky Way galaxy roughly every 140 million years. When the solar system is in the galactic arms the intensity of cosmic rays increases, as we are closer to more supernovas that give off powerful bursts of cosmic rays. The variations of the cosmic ray flux due to the solar system passing through four arms of the Milky Way galaxy during the last 550 million years are ten times greater than that caused by the Sun. The correlation between cosmic rays and temperatures over 520 million years by N. Shaviv and J. Veizer was shown previously. Below is a similar graph based on their work, but with the times of the galactic arm crossings shown.

Cosmic Ray Flux and Temperature Changes with Galactic Arm Crossings

Four switches from warm hothouse to cold icehouse conditions during the Phanerozoic are shown in variations of several degrees K in tropical sea-surface temperatures (red curve). They correspond with four encounters with spiral arms of the Milky Way and the resulting increases in the cosmic-ray flux (blue curve, scale inverted). (After Shaviv and Veizer 2003)

Temperature changes over this time range cannot be explained by the CO2 theory.

CO2 Concentrations 500 million Years

The graph shows CO2 concentration over the last 500 million years. The CO2 does not correlate with temperature. Note that when CO2 concentrations were more than 10 times present levels, about 175 million years ago and 440 million years ago, the Earth was in two very cold ice ages.

Sources:

1. "Cosmoclimatology: a new theory emerges", paper by Henrik Svensmark, 2007

2. "Celestial driver of Phanerozoic climate?", paper by Shaviv and Veizer, 2003

3. Tim Patterson's National Post July 2003 review of the Shaviv and Veizer paper

Milankovitch Cycles

The Earth-Sun orbital changes are the principal causes of long term climate change. During the last 800,000 years, eight periods of glaciations have occurred. Each ice age lasts about 100,000 years with warm interglacial periods lasting 10,000 to 12,000 years. Milutin Milankovitch (1879-1958) identified three major cyclical variables which became recognized as the major causes of climate change. The amount of solar radiation reaching the Earth depends on the distance of the Earth to the Sun and the angle of incidence of the Sun's rays upon the Earth's surface. The Earth's axis tilt changes on a 40,000-year cycle, the precession of the equinox changes on a 21,000-year cycle, and the eccentricity of the Earth's elliptical orbit changes on a 100,000-year cycle.

The Earth's axis tilt (also known as obliquity of the ecliptic) changes from 22 to 24.5 degrees over a 40,000-year cycle. Summer to winter extremes are greater when the axis tilt is greater. The precession of the equinox refers to the Earth's wobble as it spins on its axis. Currently, the north axis points to the North Star, Polaris. In 13,000 years it would point to the star Vega, then return to Polaris in another 13,000 years, creating a 26,000-year cycle. When this is combined with the advance of the perihelion (the point at which the Earth is closest in its orbit to the Sun), it produces a 21,000-year cycle. The variation of the elliptical shape of the Earth's orbit around the Sun ranges from an almost exact circle (eccentricity = 0.0005) to a slightly elongated shape (eccentricity = 0.0607) on a 100,000-year cycle. The Earth's eccentricity varies primarily due to interactions with the gravitational fields of other planets. The impact of the variation is a change in the amount of solar energy from the closest approach to the Sun (perihelion, around January 3) to the farthest distance from the Sun (aphelion, around July 4). Currently the Earth's eccentricity is 0.016 and there is about a 6.4 percent increase in incoming solar energy from July to January. In the Northern Hemisphere, winter occurs during the closest approach to the Sun. The graph below shows the three cycles versus time. The vertical line represents the present, negative time is the past and positive time is the future.
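The link between eccentricity and the July-to-January change in incoming solar energy follows from the inverse-square law. The sketch below is a worked check under that assumption only; the function name is introduced here for illustration. With eccentricity e, the perihelion and aphelion distances are a(1 - e) and a(1 + e), so the flux ratio is ((1 + e)/(1 - e))², which is approximately 1 + 4e for small e.

```python
def perihelion_aphelion_increase(e):
    """Fractional increase in incoming solar flux from aphelion to perihelion."""
    # Flux scales as 1 / r^2, with r_aphelion = a(1 + e) and r_perihelion = a(1 - e).
    return ((1 + e) / (1 - e)) ** 2 - 1

for label, e in [("near-circular", 0.0005),
                 ("present", 0.016),
                 ("most elongated", 0.0607)]:
    exact = perihelion_aphelion_increase(e)
    first_order = 4 * e
    print(f"{label}: e={e}  exact={exact:.2%}  first-order 4e={first_order:.2%}")
```

For e = 0.016 the first-order term 4e gives the 6.4 percent quoted above, while the exact ratio is slightly higher, at about 6.6 percent.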

Analysis of deep-sea cores shows sea temperature changes corresponding to these cycles, with the 100,000-year cycle being the strongest.

These orbital cycles do not change the solar radiation reaching the Earth enough to cause major climatic change without an amplifying effect. A plausible amplifier is the Sun's varying solar wind, which modifies the amount of cosmic rays reaching the Earth's atmosphere.

The rate of change of global ice volume varies inversely with the solar insolation due to orbital changes. The graph below compares the June solar insolation anomaly north of 65 degrees latitude to the rate of change of global ice volume over the last 750,000 years. Reconstructions of global ice volumes rely on the measurement of oxygen isotopes in the shells of foraminifera from deep-sea sediment cores. The records also in part reflect deep ocean temperatures. Two ice volume records are shown: SPECMAP and HW04.

The ice melting and sublimation rates are very sensitive to summertime temperatures. The strong correlations and the absence of a large time lag demonstrate essentially concurrent variations in the change of ice volumes and summertime insolation in the northern high latitudes. Both ice volume reconstructions therefore support the Milankovitch hypothesis and show that the Sun is the dominant climate driver. The graph is from the 2006 paper "In defense of Milankovitch" by G. Roe.
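The distinction between ice volume and its rate of change can be illustrated with a toy calculation. The series below are synthetic sinusoids, not the SPECMAP or HW04 records, and the relation dV/dt = -insolation is assumed purely for the illustration. The sketch shows that when the rate of change tracks insolation, it is the differentiated record, rather than the volume itself, that correlates strongly and without lag.

```python
import numpy as np

# Synthetic illustration only: these series are NOT the SPECMAP or HW04 records.
t = np.arange(0.0, 750.0, 0.5)  # time in thousands of years
insolation = np.sin(2 * np.pi * t / 40.0) + 0.5 * np.sin(2 * np.pi * t / 21.0)

# Assumption for the toy series: ice grows when insolation is low,
# dV/dt = -insolation, integrated with a simple cumulative sum.
dt = t[1] - t[0]
ice_volume = -np.cumsum(insolation) * dt

# Recover the rate of change from the "record" and compare it to insolation.
ice_rate = np.gradient(ice_volume, t)
corr_rate = np.corrcoef(insolation, ice_rate)[0, 1]
corr_volume = np.corrcoef(insolation, ice_volume)[0, 1]

print(f"insolation vs rate of change of ice volume: r = {corr_rate:.2f}")
print(f"insolation vs ice volume itself:            r = {corr_volume:.2f}")
```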

Heating Of The Troposphere

Computer models based on the theory of CO2 warming predict that the troposphere in the tropics should warm faster than the surface in response to increasing CO2 concentrations, because that is where the CO2 greenhouse effect operates. The Sun-Cosmic ray warming will warm the troposphere more uniformly.

The UN's IPCC fourth assessment report includes a set of plots of computer model predicted rate of temperature change from the surface to 30 km altitude and over all latitudes for 5 types of climate forcings as shown below.

Computer Model Predicted Temperature Change

Source: Greenhouse Warming? What Greenhouse Warming? by Christopher Monckton.

The six plots show predicted temperature changes due to:

a) the Sun

b) volcanic activity

c) anthropogenic CO2 and other greenhouse gasses

d) anthropogenic ozone

e) anthropogenic sulphate aerosol particles

f) all the above forcings combined

The rate of temperature change is shown by the colour in degrees Celsius per century.

It is apparent that plot c) of warming caused by greenhouse gasses is strikingly distinct from other causes of warming. Plot f) is similar to plot c) only because the IPCC assumes that CO2 is the dominant cause of global warming.

The computer models show that greenhouse warming will cause a hot-spot at an altitude between 8 and 12 km over the tropics between 30 N and 30 S. The temperature at this hot-spot is projected to increase at two to three times the rate at the surface.

However, the Hadley Centre's real-world plot of radiosonde temperature observations shown below does not show the projected CO2 induced global warming hot-spot at all. The predicted hot-spot is entirely absent from the observational record. This shows that the atmospheric warming theory programmed into climate models is wrong.

HadAT2 Radiosonde Data 1979 - 1999

Source: p. 116, fig. 5.7E, CCSP HadAT2 radiosonde observations, 2006.

The left scale is atmosphere pressure in hPa and the right scale is altitude in km. The colours represent -0.6 to 0.6 °C/decade.

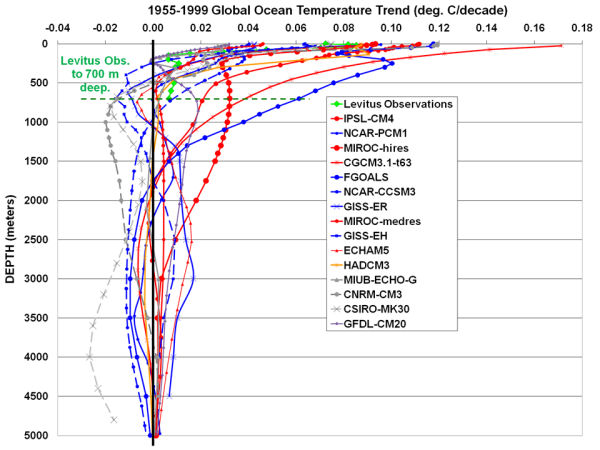

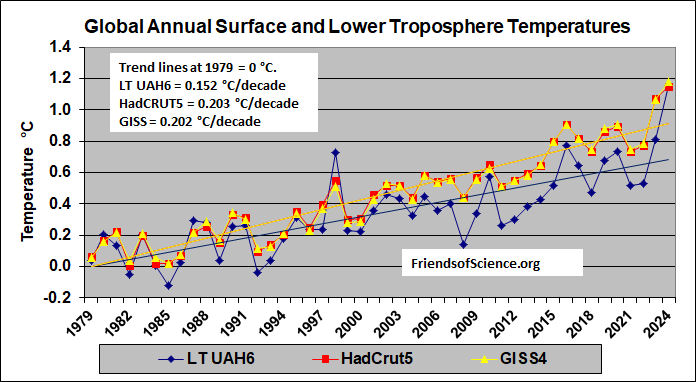

The graph below compares the global annual temperatures of the troposphere to the surface measurements. The lower troposphere measurements are from the University of Alabama in Huntsville (LT UAH v.6) and represent the temperature of the troposphere up to approximately 8 km. The HadCRUT5 curve is the land and sea-surface temperature data set from the UK Met Office. The GISS4 curve is the surface temperature data set from the Goddard Institute for Space Studies. The three curves are scaled so that the trend lines equal 0 degrees Celsius in 1979. The graph shows the GISS4 and HadCRUT5 temperatures increasing at 0.20 °C/decade and the lower troposphere warming at only 0.152 °C/decade. All climate models forecast the lower troposphere warming faster than the surface due to increasing water vapour. The GISS climate model has the lower troposphere (weighted the same as the satellites) warming at 130% of the rate of the surface temperatures.
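The trend comparison above reduces to a least-squares slope and a ratio. The following is a minimal sketch, assuming an ordinary least-squares fit; the helper name `trend_per_decade` and the noise-free test series are hypothetical and serve only to show that the helper recovers a known slope. The trend values and the 130% model figure are the ones quoted in the text.

```python
import numpy as np


def trend_per_decade(years, anomalies):
    """Ordinary least-squares slope, converted from per year to per decade."""
    years = np.asarray(years, dtype=float)
    # Centre the years so the fit is well conditioned.
    slope_per_year, _intercept = np.polyfit(years - years.mean(), anomalies, 1)
    return slope_per_year * 10.0


# Trends quoted in the text (degrees Celsius per decade).
surface_trend = 0.20
lower_troposphere_trend = 0.152
observed_ratio = lower_troposphere_trend / surface_trend
model_ratio = 1.30  # GISS model figure quoted in the text

# Hypothetical noise-free series, used only to check the helper.
years = np.arange(1979, 2024)
synthetic = 0.0152 * (years - 1979)
fitted = trend_per_decade(years, synthetic)

print(f"fitted trend: {fitted:.3f} C/decade")
print(f"observed LT/surface ratio: {observed_ratio:.2f}, model ratio: {model_ratio:.2f}")
```

With the quoted trends, the observed lower troposphere warms at 76% of the surface rate, against 130% in the model.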

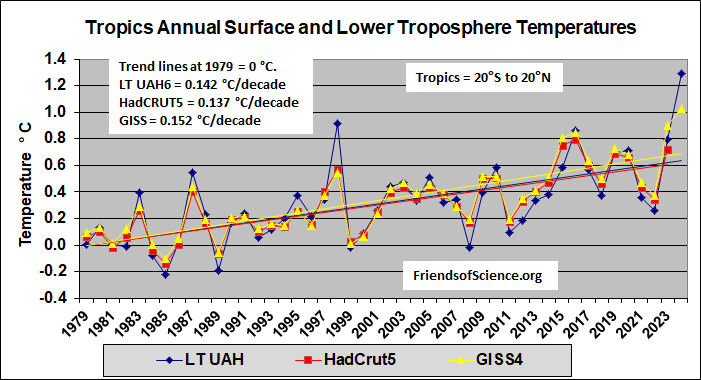

The graph below compares the annual temperatures of the troposphere to the surface measurements in the tropics. The lower troposphere data is from UAH6 and the surface data is from HadCRUT5 and GISS4. The latitude range for all three datasets is from 20 degrees North to 20 degrees South. (GISS data to Sep. 2023 only)

A comparison of the records shows that the surface has warmed faster than the troposphere, the opposite of what is predicted by the theory of CO2 warming. The GISS AF model warms the lower troposphere 30% faster than the surface.

The predicted troposphere warming response in the tropics to global warming is the fingerprint of the hypothetical positive water vapour feedback that is programmed into the climate models.

The UAH analysis is from the University of Alabama in Huntsville. It uses microwave measurements from several satellites. The IPCC projections do not agree with the data.

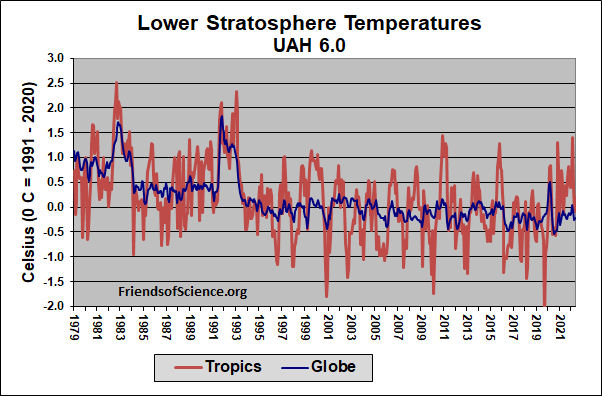

Stratospheric Cooling

The graph "HadAT2 Radiosonde Data 1979-1999" in the previous section shows that the stratosphere (above 16 km) has cooled, which might appear to indicate a greenhouse gas effect. However, stratospheric cooling is predicted to occur due to both greenhouse gasses and ozone depletion. The ozone concentration in the stratosphere declined from 1970 until 1995, and has not declined at all since then due to the implementation of the Montreal Protocol, which limits the emission of ozone-depleting CFCs. The stratosphere temperatures shown below are from here.

The lower stratosphere temperature has not declined at all since 1995 (the period in which ozone levels have been stable or slightly increasing), so the weather balloon data does not indicate any greenhouse gas cooling of the stratosphere. In fact, it appears that there has been a slight warming of the lower stratosphere since 1995, the opposite of what is predicted by computer models of the greenhouse gas effects. The stratosphere cooling indicated by the radiosonde data is caused by the changing ozone concentration, not by greenhouse gasses.

Below is a graph of lower stratosphere temperature from the University of Alabama in Huntsville satellite data. It shows no change in temperature from 1994 through 2015, then a small drop in 2016. The two prominent peaks in 1982 and 1991 were caused by large volcanic eruptions.

CO2 Versus The Sun Warming Theories

The following table sets out a comparison of the predictions of two climate theories - the CO2 warming theory and the Sun/Cosmic Ray theory - and actual real world data.

| Issue | Prediction - CO2 Theory | Prediction - Sun / Cosmic Ray Theory | Actual Data | Which Theory Wins |
|---|---|---|---|---|
| Antarctic and Arctic Temperatures | Temperatures in the Arctic and Antarctic will rise symmetrically | Temperatures will initially move in opposite directions | Temperatures move in opposite directions | Sun / Cosmic Ray |
| Troposphere Temperature | Fastest warming will be in the troposphere over the tropics | The troposphere warming will be uniform | The surface warming is similar to or greater than troposphere warming | Sun / Cosmic Ray |
| Timing of CO2 and Temperature Changes at End of Ice Age | CO2 increases then temperature increases | Temperature increases then CO2 increases | CO2 concentrations increase about 800 years after temperature increases | Sun / Cosmic Ray |
| Temperature correlation with the driver over the last 400 years | n/a | n/a | Cosmic ray flux and Sun activity correlate with temperature, CO2 does not | Sun / Cosmic Ray |
| Temperatures during Ordovician period | Very hot due to CO2 levels > 10X present | Very cold due to high cosmic ray flux | Very cold ice age | Sun / Cosmic Ray |
| Other Planets' Climate | No change | Other planets will warm | Warming has been detected on several other planets | Sun / Cosmic Ray |

IPCC And Model Projections

The Intergovernmental Panel on Climate Change (IPCC) presents projections of climate change that are based on computer models. The Fifth Assessment Report (AR5) Working Group 1, "Climate Change 2013: The Physical Science Basis" was published online here on January 30, 2014. The projections given in the report are based on four scenarios, or Representative Concentration Pathways (RCPs), which include different assumptions of CO2 and other greenhouse gas emissions. The names of the scenarios correspond to different target forcings at 2100 (compared to 1750): 2.6, 4.5, 6.0 and 8.5 W/m2. These RCPs replace the emission scenarios used in the fourth assessment report.

RCP2.6 is a strong mitigation scenario.

RCP4.5 is a mitigation scenario where radiative forcing is stabilized before 2100.

RCP6.0 is a slower mitigation scenario where radiative forcing is stabilized after 2100.

RCP8.5 is an extreme emissions scenario where greenhouse gas emissions rate increases.

The graph below shows the CO2 concentration in air to the year 2050 for each RCP scenario. The light blue curve is the historical CO2 concentrations.

The CO2 concentrations of RCP2.6, RCP4.5 and RCP6.0 are similar up to 2030. RCP2.6 CO2 stabilizes shortly after 2040. The actual CO2 concentration increased at 0.54%/year from 2005 to 2013. The RCP8.5 CO2 concentrations increase at 1.00%/year by 2050, and at 1.16%/year by 2070, which is more than double the historical growth rate.
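The growth rates above are compound annual rates, which can be sketched as follows. The two concentration values are assumed, approximate annual means chosen for illustration and are not taken from this essay; the RCP8.5 rates and the 0.54%/year historical rate are the figures quoted in the text.

```python
def annual_growth_rate_pct(start_value, end_value, n_years):
    """Compound annual growth rate in percent per year."""
    return ((end_value / start_value) ** (1.0 / n_years) - 1.0) * 100.0


# Assumed, approximate annual-mean CO2 concentrations (ppm) for illustration;
# these two numbers are not taken from the essay.
co2_2005 = 379.8
co2_2013 = 396.5

historical = annual_growth_rate_pct(co2_2005, co2_2013, 2013 - 2005)
print(f"2005-2013 growth: {historical:.2f} %/year")

# Ratios of the RCP8.5 growth rates quoted in the text to the quoted
# historical rate of 0.54 %/year.
quoted_historical = 0.54
rcp85_ratios = {year: rate / quoted_historical for year, rate in [(2050, 1.00), (2070, 1.16)]}
for year, ratio in rcp85_ratios.items():
    print(f"RCP8.5 by {year}: {ratio:.2f}x the historical rate")
```

On these figures the 2070 rate is the one that exceeds double the historical rate; the 2050 rate is somewhat below double.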

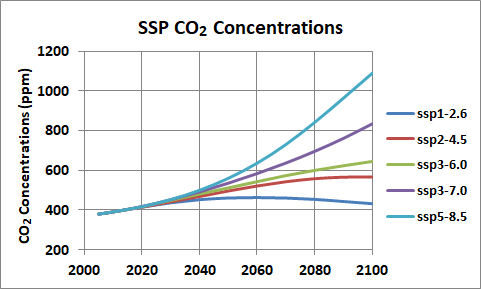

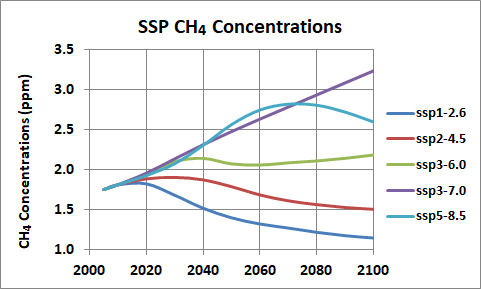

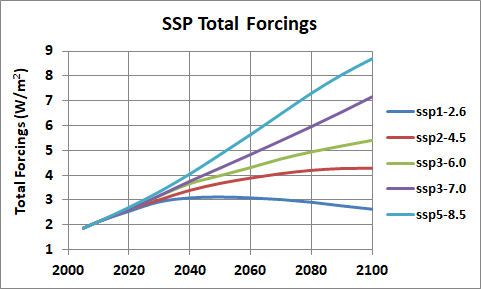

The IPCC's Sixth Assessment Working Group 1 Report "Climate Change 2021 The Physical Science Basis" was published August 2021. It replaces the RCP emissions scenarios with a new range of scenarios based on Shared Socio-economic Pathways. A scenario is a description of how the future may develop, based on a set of assumptions about key drivers including demography, economic processes, technological innovation and governance. These assumptions are combined with assumptions of mitigation actions to produce scenarios of emissions of greenhouse gases, aerosols and other climate drivers. Section 1.6.1 of WGI says "Scenarios are not predictions; instead, they provide a ‘what-if’ investigation of the implications of various developments and actions."

The emissions of CO2 by the five main scenarios are shown below. The emissions are from fossil fuel, industry (cement) and land use changes. They are in gigatonnes of CO2 per year (Gt/yr). The illustrative scenarios are referred to as SSPx-y, where ‘SSPx’ refers to the Shared Socio-economic Pathway or ‘SSP’ and ‘y’ refers to the approximate level of radiative forcing (in W/m2) resulting from the scenario in the year 2100.

The corresponding CO2 concentration, based on the climate models' average carbon cycle, is shown below in parts per million (ppm).

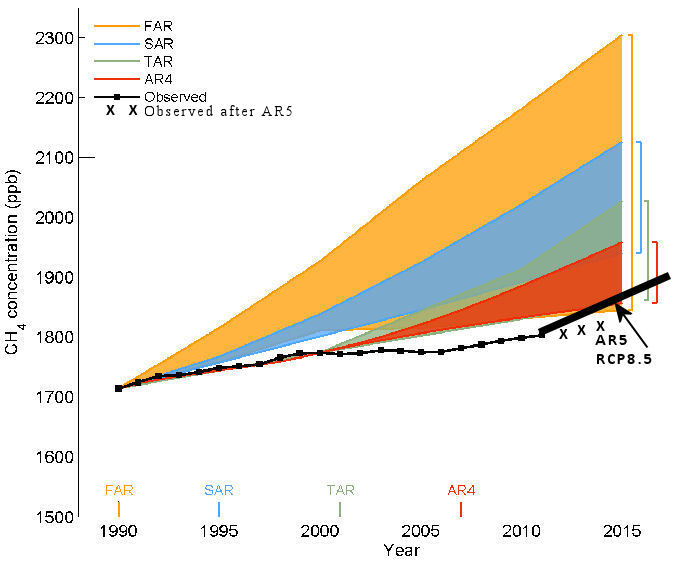

The methane (CH4) concentrations are shown below.

Increasing human-caused greenhouse gas concentrations produce increasing radiative forcings in W/m2 at the top of the atmosphere compared to pre-industrial times.

The SSP3-7.0 and SSP5-8.5 scenarios are extreme and unrealistic, as both CO2 and CH4 concentrations increase much faster than the historical changes. While the second number in an SSP scenario name is supposed to be the forcing at 2100, SSP4-6.0 in fact has a 2100 forcing of only 5.14 W/m2, much less than 6.0 W/m2. SSP5-8.5 has a 2100 forcing of 8.70 W/m2.
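The gap between a scenario's nominal and stated forcing can be tabulated with a small sketch. The helper `parse_ssp_name` is hypothetical, written here only to split a label of the SSPx-y form; the two 2100 forcing values are the ones quoted in the paragraph above.

```python
import re


def parse_ssp_name(name: str) -> tuple[int, float]:
    """Split a label such as 'SSP5-8.5' into (pathway number, nominal 2100 forcing in W/m2)."""
    match = re.fullmatch(r"SSP(\d)-(\d+(?:\.\d+)?)", name.upper())
    if match is None:
        raise ValueError(f"not an SSPx-y scenario name: {name!r}")
    return int(match.group(1)), float(match.group(2))


# 2100 forcings (W/m2) quoted in the text for two scenarios.
quoted_forcing_2100 = {"SSP4-6.0": 5.14, "SSP5-8.5": 8.70}

differences = {}
for name, actual in quoted_forcing_2100.items():
    pathway, nominal = parse_ssp_name(name)
    differences[name] = actual - nominal
    print(f"{name}: nominal {nominal:.2f}, quoted {actual:.2f}, "
          f"difference {differences[name]:+.2f} W/m2")
```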

Global Mean Surface Temperature Change degrees Celsius

Below is a table of temperature projections from AR5.

| Scenario | 2055 (relative to 1986 to 2005) | 2090 (relative to 1986 to 2005) | 2055 (relative to 2013) | 2090 (relative to 2013) |
|---|---|---|---|---|
| RCP2.6 | 1.0 | 1.0 | 0.8 | 0.8 |
| RCP4.5 | 1.4 | 1.8 | 1.2 | 1.6 |
| RCP6.0 | 1.3 | 2.2 | 1.1 | 2.0 |
| RCP8.5 | 2.0 | 3.7 | 1.8 | 3.5 |

The graph below from figure SPM.8 of AR6 WG1 Summary for Policy Makers shows the temperature projections to 2100. Very likely ranges are shown by shading for SSP1-2.6 and SSP3-7.0.

Kevin Trenberth is head of the large US National Center for Atmospheric Research and one of the advisors of the IPCC. Trenberth asserts ". . . there are no (climate) predictions by IPCC at all. And there never have been". Instead, there are only "what if" projections of future climate that correspond to certain emissions scenarios. According to Trenberth, GCMs ". . . do not consider many things like the recovery of the ozone layer, for instance, or observed trends in forcing agents. None of the models used by IPCC is initialised to the observed state and none of the climate states in the models corresponds even remotely to the current observed climate." However, Scott Armstrong and Kesten Green audited the relevant chapter in the IPCC's latest report. They find that "in apparent contradiction to claims by some climate experts that the IPCC provides 'projections' and not 'forecasts', the word 'forecast' and its derivatives occurred 37 times, and 'predict' and its derivatives occur 90 times" in the chapter. Consequently, it is not surprising that the public has the misimpression that the IPCC predicts future climate.

Computer Models Fail

The IPCC assumes that the Sun has little effect, even though observational evidence clearly shows the Sun has a significant effect on climate.

The models assume the 20th century temperature rise is caused only by greenhouse gas concentration increases, and parameters are set in the models to make the temperature rise in response to the greenhouse gas forcing. The direct effect of increasing CO2 concentration on global warming is very small. All the models amplify an initial increase in temperature due to CO2 by employing water vapour and clouds as a large positive feedback. However, there is no evidence that water vapour and clouds provide a large positive feedback. They may provide a negative feedback.

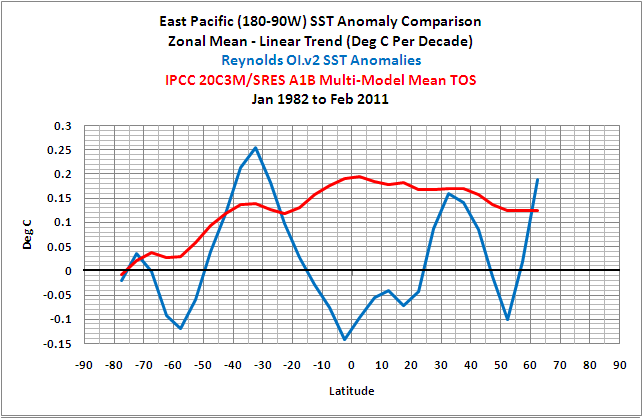

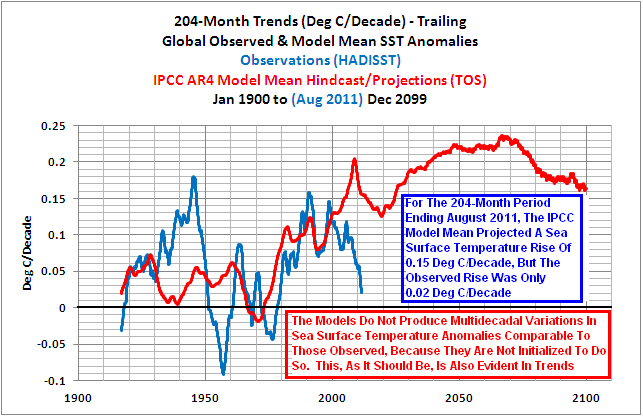

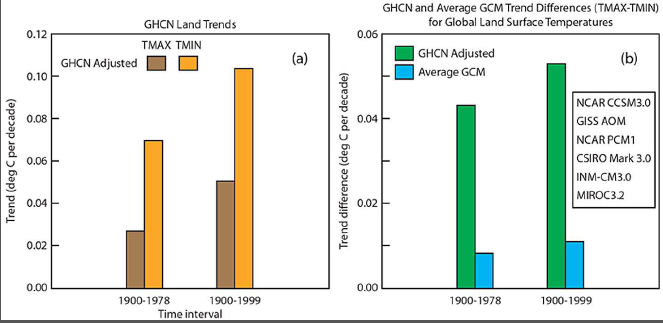

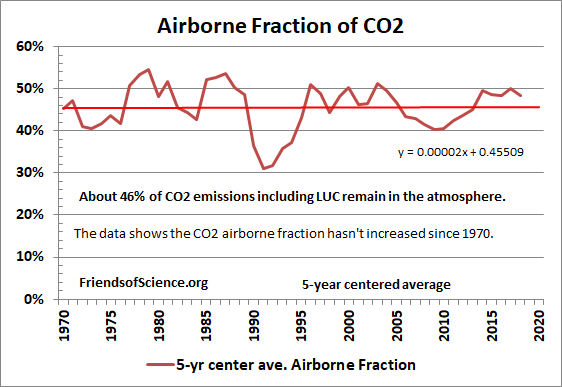

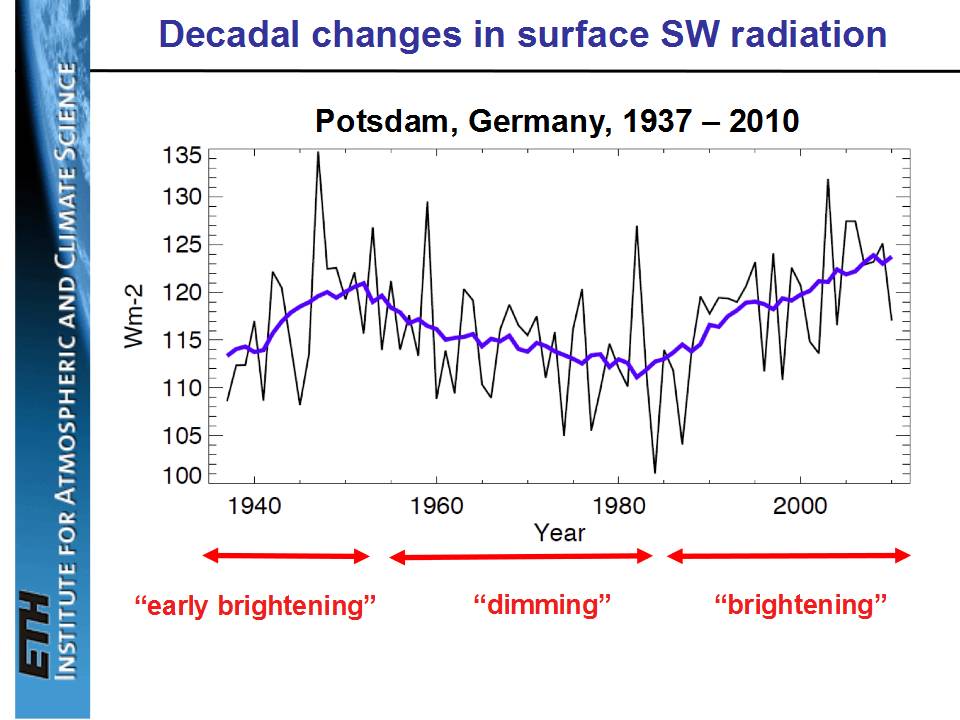

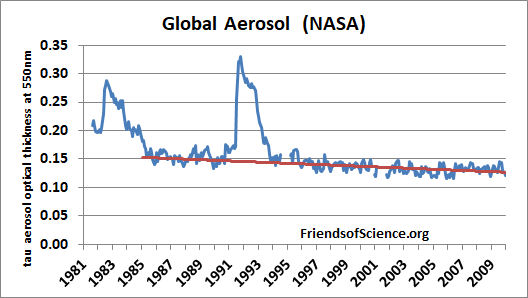

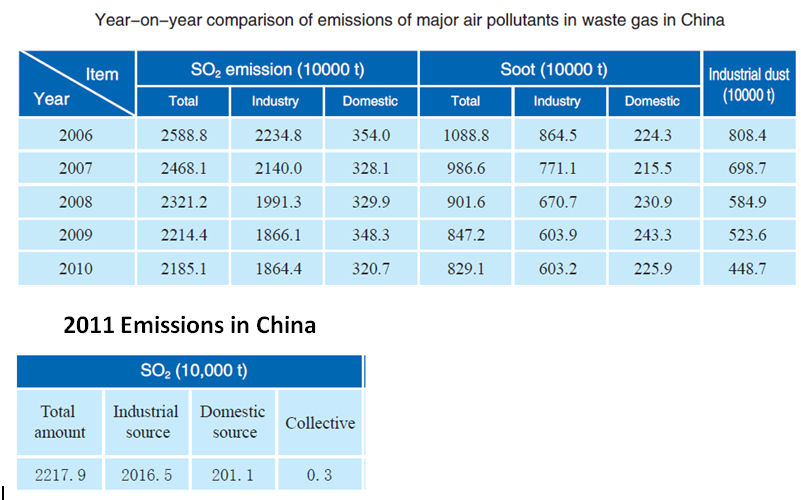

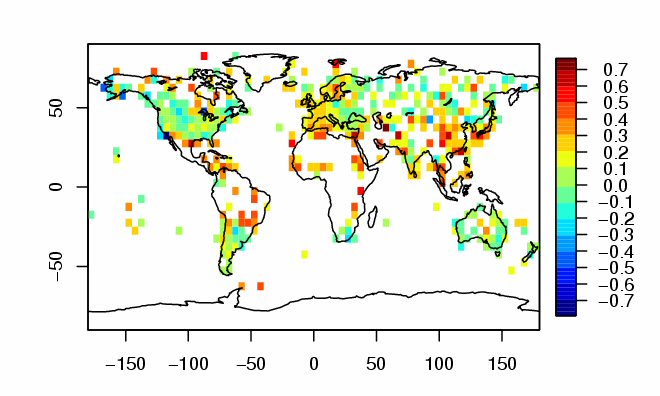

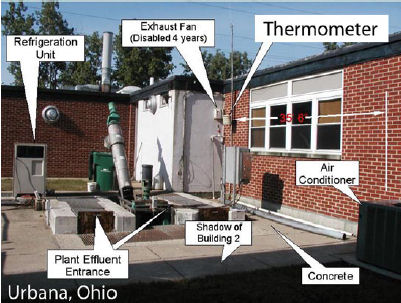

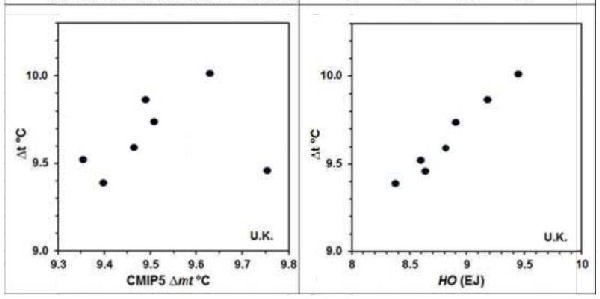

Climate models simulate climate using large grid blocks, which are too large to resolve thunderstorms or hurricanes, so they use parameterizations to account for these. These parameterizations ignore real-world transfers of energy, moisture and momentum that could significantly alter the results, which severely limits the usefulness of climate model projections. Computer models employ approximations to represent physical processes that cannot be directly computed due to computational limitations. Because many empirical parameters can be selected to force a model to match observations, the ability of a model to match observations cannot be cited as evidence that the model is realistic and does not imply it is reliable for forecasting climate. See the Fraser Institute's Independent Summary for Policymakers.
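The point about tunable parameters can be illustrated with a toy curve fit. This is not a climate model: the data are synthetic (a straight line plus random noise) and the "models" are simply polynomials with two or nine free coefficients. The sketch compares how well each matches the data it was tuned to against how well it does on a held-back interval.

```python
import numpy as np

rng = np.random.default_rng(0)


def truth(x):
    """Underlying relation used to generate the synthetic data."""
    return 0.5 * x


def rms(a, b):
    """Root-mean-square difference between two arrays."""
    return float(np.sqrt(np.mean((a - b) ** 2)))


# Toy "observations": a straight line plus noise. Purely synthetic.
x_fit = np.linspace(-1.0, 0.0, 12)            # interval used for tuning
x_held_back = np.linspace(0.0, 1.0, 12)[1:]   # interval held back from tuning
y_fit = truth(x_fit) + rng.normal(0.0, 0.05, x_fit.size)

results = {}
for n_params in (2, 9):
    coeffs = np.polyfit(x_fit, y_fit, n_params - 1)
    fit_error = rms(np.polyval(coeffs, x_fit), y_fit)
    held_back_error = rms(np.polyval(coeffs, x_held_back), truth(x_held_back))
    results[n_params] = (fit_error, held_back_error)
    print(f"{n_params} parameters: fit error {fit_error:.3f}, "
          f"held-back error {held_back_error:.3f}")
```

In this toy setting the nine-parameter fit matches the tuning interval at least as closely as the two-parameter fit but does far worse on the held-back interval, which is the sense in which a good match to observations does not by itself demonstrate forecasting skill.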